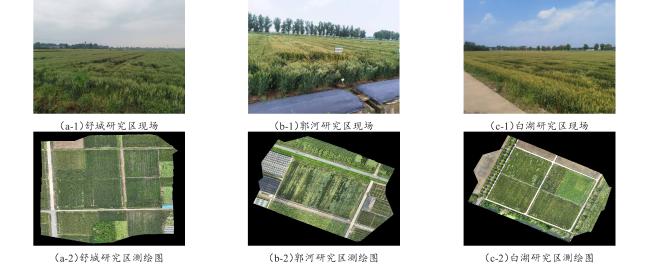

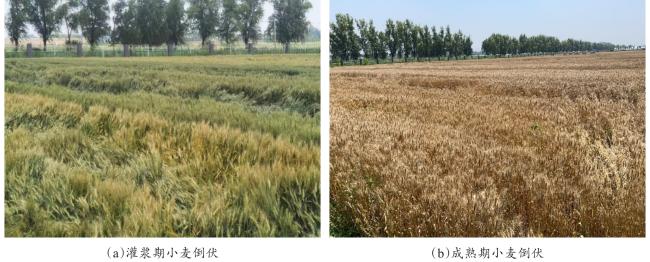

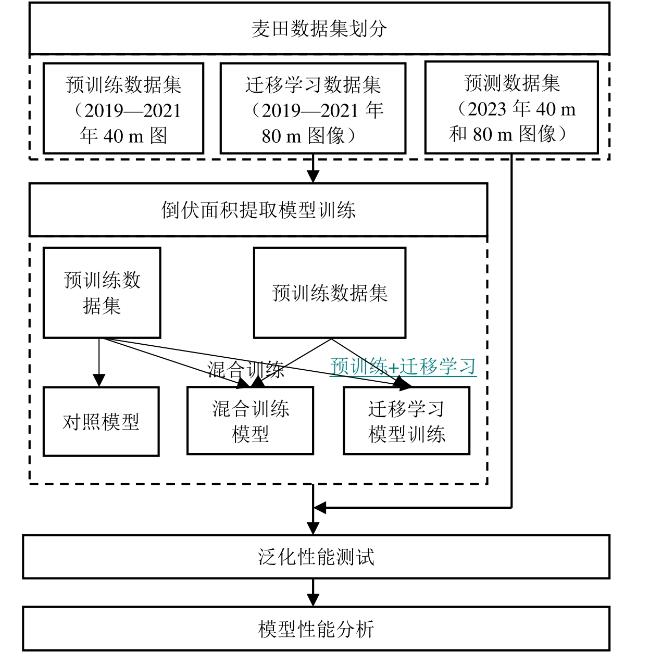

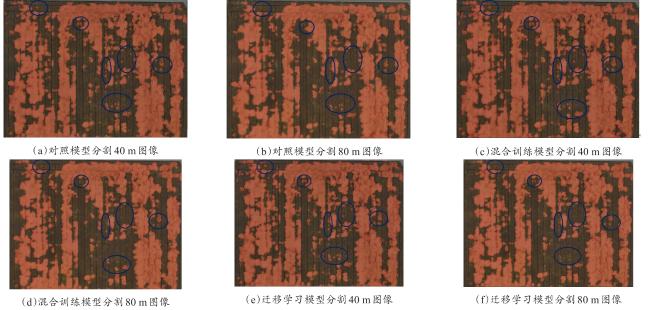

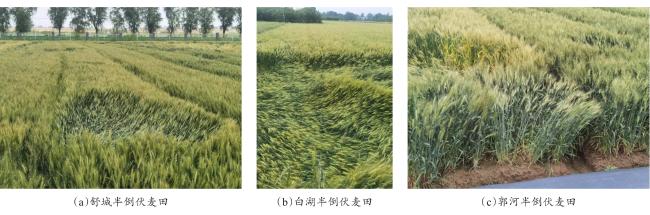

[Objective] Lodging constitutes a severe crop-related catastrophe, resulting in a reduction in photosynthesis intensity, diminished nutrient absorption efficiency, diminished crop yield, and compromised crop quality. The utilization of unmanned aerial vehicles (UAV) to acquire agricultural remote sensing imagery, despite providing high-resolution details and clear indications of crop lodging, encounters limitations related to the size of the study area and the duration of the specific growth stages of the plants. This limitation hinders the acquisition of an adequate quantity of low-altitude remote sensing images of wheat fields, thereby detrimentally affecting the performance of the monitoring model. The aim of this study is to explore a method for precise segmentation of lodging areas in limited crop growth periods and research areas. [Methods] Compared to the images captured at lower flight altitudes, the images taken by UAVs at higher altitudes cover a larger area. Consequently, for the same area, the number of images taken by UAVs at higher altitudes is fewer than those taken at lower altitudes. However, the training of deep learning models requires huge amount supply of images. To make up the issue of insufficient quantity of high-altitude UAV-acquired images for the training of the lodging area monitoring model, a transfer learning strategy was proposed. In order to verify the effectiveness of the transfer learning strategy, based on the Swin-Transformer framework, the control model, hybrid training model and transfer learning training model were obtained by training UAV images in 4 years (2019, 2020, 2021, 2023)and 3 study areas(Shucheng, Guohe, Baihe) under 2 flight altitudes (40 and 80 m). To test the model's performance, a comparative experimental approach was adopted to assess the accuracy of the three models for segmenting 80 m altitude images. The assessment relied on five metrics: intersection of union (IoU), accuracy, precision, recall, and F1-score. [Results and Discussions] The transfer learning model shows the highest accuracy in lodging area detection. Specifically, the mean IoU, accuracy, precision, recall, and F1-score achieved 85.37%, 94.98%, 91.30%, 92.52% and 91.84%, respectively. Notably, the accuracy of lodging area detection for images acquired at a 40 m altitude surpassed that of images captured at an 80 m altitude when employing a training dataset composed solely of images obtained at the 40 m altitude. However, when adopting mixed training and transfer learning strategies and augmenting the training dataset with images acquired at an 80 m altitude, the accuracy of lodging area detection for 80 m altitude images improved, inspite of the expense of reduced accuracy for 40 m altitude images. The performance of the mixed training model and the transfer learning model in lodging area detection for both 40 and 80 m altitude images exhibited close correspondence. In a cross-study area comparison of the mean values of model evaluation indices, lodging area detection accuracy was slightly higher for images obtained in Baihu area compared to Shucheng area, while accuracy for images acquired in Shucheng surpassed that of Guohe. These variations could be attributed to the diverse wheat varieties cultivated in Guohe area through drill seeding. The high planting density of wheat in Guohe resulted in substantial lodging areas, accounting for 64.99% during the late mature period. The prevalence of semi-lodging wheat further exacerbated the issue, potentially leading to misidentification of non-lodging areas. Consequently, this led to a reduction in the recall rate (mean recall for Guohe images was 89.77%, which was 4.88% and 3.57% lower than that for Baihu and Shucheng, respectively) and IoU (mean IoU for Guohe images was 80.38%, which was 8.80% and 3.94% lower than that for Baihu and Shucheng, respectively). Additionally, the accuracy, precision, and F1-score for Guohe were also lower compared to Baihu and Shucheng. [Conclusions] This study inspected the efficacy of a strategy aimed at reducing the challenges associated with the insufficient number of high-altitude images for semantic segmentation model training. By pre-training the semantic segmentation model with low-altitude images and subsequently employing high-altitude images for transfer learning, improvements of 1.08% to 3.19% were achieved in mean IoU, accuracy, precision, recall, and F1-score, alongside a notable mean weighted frame rate enhancement of 555.23 fps/m2. The approach proposed in this study holds promise for improving lodging monitoring accuracy and the speed of image segmentation. In practical applications, it is feasible to leverage a substantial quantity of 40 m altitude UAV images collected from diverse study areas including various wheat varieties for pre-training purposes. Subsequently, a limited set of 80 m altitude images acquired in specific study areas can be employed for transfer learning, facilitating the development of a targeted lodging detection model. Future research will explore the utilization of UAV images captured at even higher flight altitudes for further enhancing lodging area detection efficiency.