0 Introduction

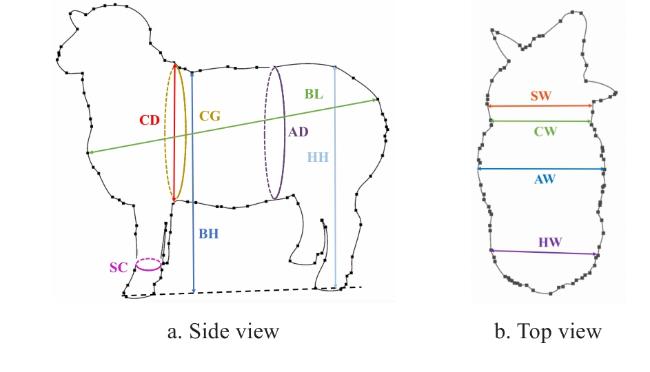

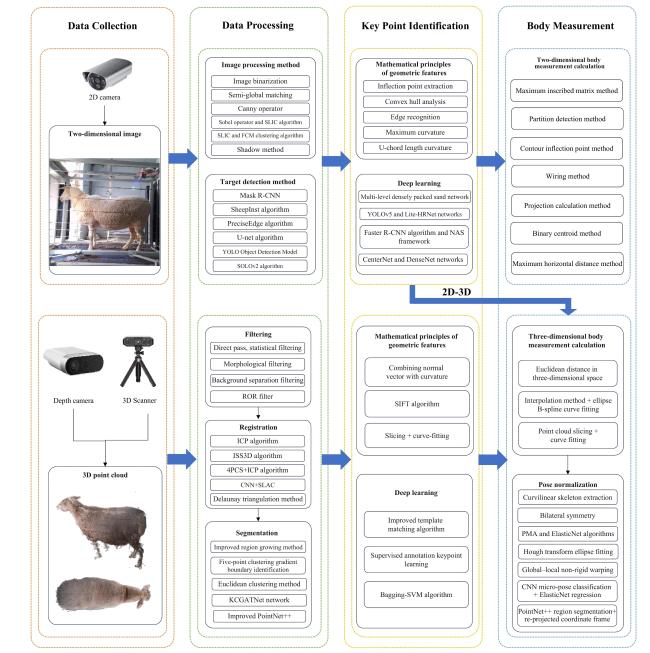

1 Method for measuring sheep body size based on computer vision

Fig. 1 Sheep body measurement diagram

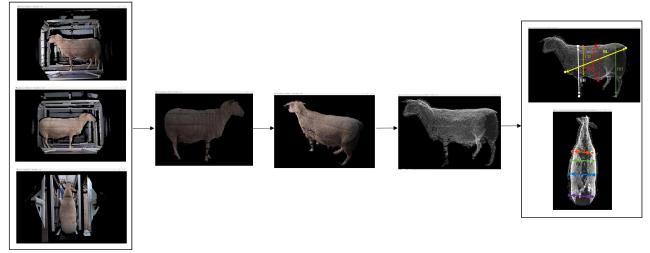

Fig. 2 Overview diagram of sheep body measurement techniques

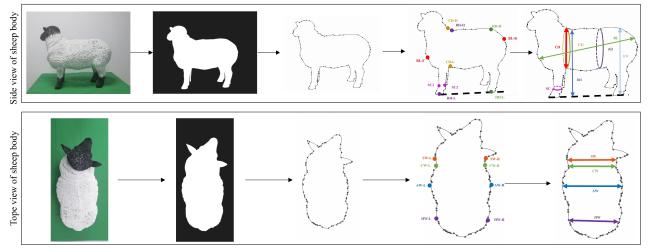

2 Two-dimensional (2D) image-based body size measurement

Fig. 3 2D image-based sheep body measurement process: a. Raw image; b. Background denoising; c. Contour extraction; d. Keypoint identification; e. Body measurement

2.1 Processing of 2D images

Table 1 Image-based body size measurement methods: applications in sheep and reference cases in livestock

| Subjects | Camera setup | Image algorithms | Animal numbers | Traits & accuracy | Main limitations | Year | Work |
|---|---|---|---|---|---|---|---|
| Sheep | Logitech C920 HD Pro webcam | Partial least squares algorithm | 27 | BL: 3.5%; BH: 3.5%; CD: 3.5% | Colour-contrast sensitive | 2014 | [3] |
| Sheep | CCD wide-angle camera | Background difference method | NA | BL: 2%; BH: 2% | Cluttered background leaks | 2014 | [4] |
| Sheep | MV-EM120C | SLIC + FCM clustering | 27 | BL: 2.03%; BH: 1.13%; CD: 4.45%; CW: 2.25%; HH: 1.54%; HW: 2.41% | Cluster number must be tuned | 2018 | [5] |
| Pig | Kinect v1 (RGB) | Semi-global matching + image subtraction | 200 | BL, BH, HH, HW, SW: < 2.83% | Depth not used; lighting sensitive | 2019 | [6] |
| Cow | Single RGB | Canny algorithm | NA | BL: 0.06%; BH: 2.28% | Edge gaps need manual repair | 2020 | [7] |
| Yak | Single RGB | Sobel operator + SLIC | 33 | BH: 1.95%; BL: 3.11%; CD: 4.91% | K-value manually tuned | 2021 | [8] |
| Cattle | Azure Kinect DK | Shadow aberration method + watershed segmentation | 34 | BL: 2.7 cm; BH: 2.07 cm; AW: 1.47 cm | High-contrast uniform background required | 2022 | [9] |
| Sheep | Single RGB | PreciseEdge | 75 | BL: r=0.943; BH: r=0.931; CG: r=0.893 | High-contrast uniform background required | 2022 | [10] |
| Sheep, Cattle | Intel RealSense D415 | U-Net | 55 | BL: 2.42%; BH: 1.86%; HH: 2.07%; CD: 2.72% | Heavy model, embedded-unfriendly | 2022 | [11] |
| Cattle | Hikvision RGB | SOLOv2 | 200 | BL: 1.36%; BH: 0.44%; CD: 2.05%; CW: 2.80% | Extreme-pose drift | 2022 | [12] |
| Cattle | Smartphone RGB | YOLOv5s + Lite-HRNet | 30 | BL: 7.55%; BH: 6.75%; CD: 8.00%; SC: 8.97% | Keypoint occlusion drift | 2024 | [13] |
| Cattle | RealSense D455 | Improved Mask2Former | 137 | BH: 4.32%; HH: 3.71%; BL: 5.58%; SC: 6.25% | High posture sensitivity; large errors in girth measurements | 2024 | [14] |

2.2 Keypoint recognition for 2D sheep body size measurement

2.2.1 Geometric feature-based keypoint recognition

Table 2 Comparison of geometric feature extraction methods for sheep body size measurement

| Subjects | Method | Principle | Time complexity | Advantage | Disadvantage | Work |
|---|---|---|---|---|---|---|
| Sheep | Corner (inflection-point) detection | Compute curvature or angle discontinuities along the contour | O(n) | Very fast; accurate localization | Noise-sensitive | [22] |
| Sheep | Convex-hull analysis | Compute the convex hull of a point set and retain its vertices | O(n log n) | Parameter-free; fast execution | Keeps only "most convex" points; loses concavities | [23] |
| Sheep | Edge detection | Detect gray-level discontinuities via gradient/entropy/filtering | O(n) | Highly general-purpose | Fragile edges; post-processing required | [24] |
| Sheep | Maximum-curvature extraction | Locate curvature extrema along the edge | O(n) | Mathematically well-defined | Requires smoothing; prone to burrs | [5] |
| Sheep | Region-based U-chord curvature | Compute curvature with a fixed-chord sliding window | O(n) | Balances local & global shape | Extra chord-length parameter to tune | [16] |
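To make the convex-hull row of Table 2 concrete, the following is a minimal sketch using Andrew's monotone chain, one common O(n log n) hull algorithm. It is not necessarily the method used in [23]; the function names and the toy contour are illustrative assumptions. The example also shows the limitation noted in the table: a concave contour point is dropped from the hull.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def cross(o: Point, a: Point, b: Point) -> float:
    """Z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points: List[Point]) -> List[Point]:
    """Return hull vertices in counter-clockwise order (monotone chain)."""
    pts = sorted(set(points))  # the sort dominates the cost: O(n log n)
    if len(pts) <= 2:
        return pts
    lower: List[Point] = []
    for p in pts:
        # Pop while the last two points and p do not make a left turn.
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other.
    return lower[:-1] + upper[:-1]


# Hypothetical contour with one concave point at (2, 1).
contour = [(0, 0), (4, 0), (4, 3), (2, 1), (0, 3)]
hull = convex_hull(contour)
```

Here `hull` keeps the four outer corners and omits `(2, 1)`, which is why hull vertices alone cannot localize keypoints that sit in concavities of the body contour.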

2.2.2 Deep learning-based keypoint recognition

Table 3 Comparison of deep learning-based keypoint detection methods for sheep and related livestock body size measurement

| Subjects | Method | Principle | Advantage | Disadvantage | Work |
|---|---|---|---|---|---|
| Sheep, Cattle | Multi-stage dense hourglass | Multi-scale fusion with iterative up/down-sampling for end-to-end keypoint detection | Highest localization accuracy and strong robustness to pose and coat variations | Large parameter count, high GPU memory usage, slow inference speed | [11] |
| Cattle | YOLOv5s + Lite high-resolution network (Lite-HRNet) | YOLOv5s proposes regions; Lite-HRNet refines keypoints | Excellent speed-accuracy trade-off, lightweight design, and suitable for edge deployment | Relies on detection box quality; keypoints tend to drift under dense occlusion | [13] |
| Pig | Faster R-CNN + neural architecture search (NAS) | Faster R-CNN defines the search region; NAS refines the keypoints | High detection accuracy and strong generalizability | Slowest inference speed, difficult to meet real-time requirements, and long training and hyper-parameter tuning cycles | [25] |

2.3 Body size parameter calculation

2.3.1 Body length

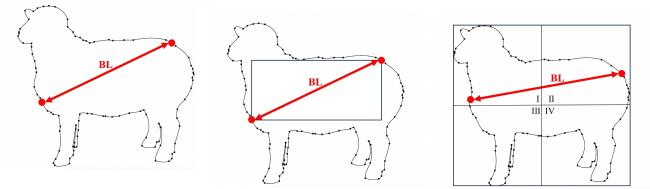

Fig. 4 Body length measurement methods of sheep: a. Overall contour point selection method; b. Maximum inscribed rectangle method; c. Zone detection method

2.3.2 Body height

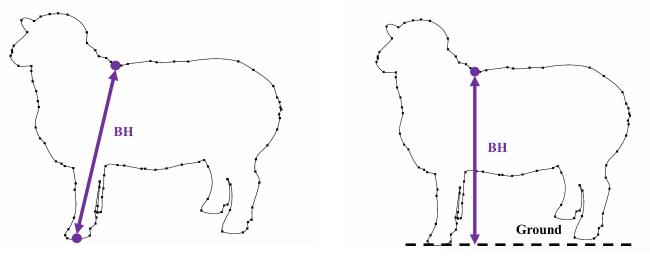

Fig. 5 Body height measurement methods of sheep: a. Withers-to-left-fore-hoof connecting method; b. Perpendicular distance from the withers point to the line connecting the fore and hind hooves
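The two body height definitions in Fig. 5 reduce to simple plane geometry once the keypoints are known. The sketch below assumes pixel coordinates for the withers and hoof keypoints and a known scale factor `cm_per_pixel`; these names and the example values are hypothetical and do not come from the cited studies.

```python
import math
from typing import Tuple

Pixel = Tuple[float, float]


def height_direct(withers: Pixel, fore_hoof: Pixel, cm_per_pixel: float) -> float:
    """Method a: straight-line distance from the withers to the fore hoof."""
    return math.dist(withers, fore_hoof) * cm_per_pixel


def height_perpendicular(withers: Pixel, fore_hoof: Pixel, hind_hoof: Pixel,
                         cm_per_pixel: float) -> float:
    """Method b: perpendicular distance from the withers to the hoof line."""
    dx = hind_hoof[0] - fore_hoof[0]
    dy = hind_hoof[1] - fore_hoof[1]
    line_length = math.hypot(dx, dy)
    if line_length == 0:
        raise ValueError("fore and hind hoof keypoints coincide")
    # |cross product| / |line| gives the point-to-line distance in pixels.
    numerator = abs(dx * (withers[1] - fore_hoof[1]) - dy * (withers[0] - fore_hoof[0]))
    return numerator / line_length * cm_per_pixel


# Hypothetical keypoints (pixels) and scale.
withers, fore_hoof, hind_hoof = (100.0, 50.0), (110.0, 350.0), (310.0, 350.0)
h_a = height_direct(withers, fore_hoof, cm_per_pixel=0.2)
h_b = height_perpendicular(withers, fore_hoof, hind_hoof, cm_per_pixel=0.2)
```

With these values method b yields 60 cm, while method a is slightly larger because the withers-to-hoof segment is not exactly perpendicular to the hoof line. Method b is therefore less affected by a forward or backward leg stance.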

2.3.3 Hip height

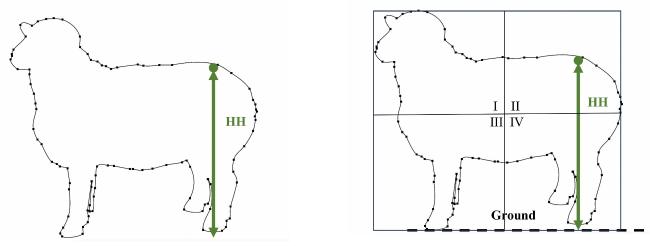

Fig. 6 Hip height measurement methods of sheep: a. Projection calculation method; b. Vertical distance from hip-height point to the hoof line

2.3.4 Chest depth

2.3.5 Chest width

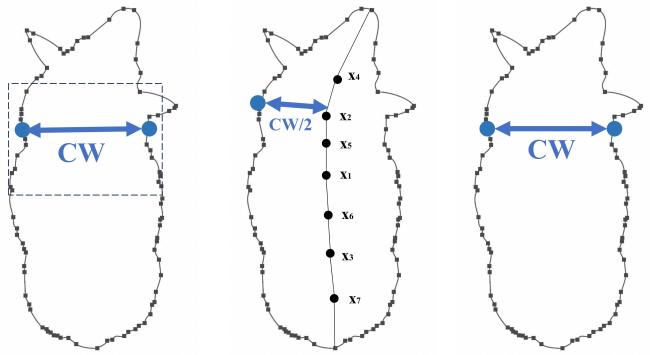

Fig. 7 Chest width measurement methods of sheep: a. Contour inflection point calculation method; b. Binary centroid method; c. Horizontal distance maximum method

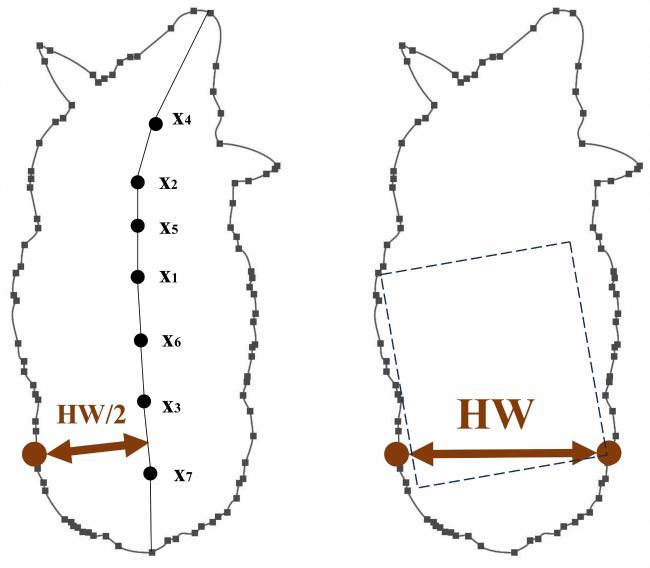

2.3.6 Hip width

Fig. 8 Hip width measurement methods of sheep: a. Binary centroid method; b. Maximum inscribed rectangle method

3 Three-dimensional (3D) point cloud-based body size measurement

Fig. 9 Three-dimensional point cloud body size measurement process of sheep: a. Original point cloud; b. Filtering; c. Registration; d. Point cloud simplification; e. Body size measurement

Table 4 Comparison of 3D point cloud methods for sheep and related livestock body size measurement

| Subjects | Camera type | Algorithms | Animal numbers | Body traits & accuracy | Year | Work |
|---|---|---|---|---|---|---|
| Sheep | REVscan 3D | Octree + Delaunay triangulation | 1 | BL / BH / CW / HH / HW: 1.01% | 2019 | [32] |
| Cow | Binocular vision | Scale-invariant feature transform (SIFT) | 20 | BL: 1.14%; BH: 1.57%; SW: 2.24% | 2020 | [33] |
| Sheep | TOF 3D camera | Principal component analysis; random sample consensus (RANSAC); improved region growing | 1 | BL / BH / CD / HH: 2.36% | 2020 | [34] |
| Cattle | Kinect v2 | Background subtraction; ROR algorithm; iterative closest point (ICP); ISS3D | 103 | BL / BH / CD / HH: < 3% | 2020 | [35] |
| Pig | Intel RealSense D720 | Depthwise separable convolution | 239 | BL: 0.75 cm; HW: 0.38 cm; BH: 1.23 cm; SW: 0.33 cm; HH: 0.66 cm | 2021 | [31] |
| Cattle | Kinect DK | Five-point clustering gradient boundary recognition algorithm | 10 | BL: 2.3%; BH: 2.8%; CG: 2.6%; AD: 2.8%; SW: 1.6% | 2022 | [36] |
| Pig | Kinect v2 | Variance classification algorithms | 50 | BL: 0.7%; BH: 1.8%; SW: 3.3% | 2022 | [37] |
| Pig | RealSense L515 | DeepLabCut + EfficientNet-b6 | 12 | BL / BH / HH / HW / SW: 1.79 cm | 2023 | [38] |
| Pig | NA | Improved PointNet++ | 25 | BL: 2.57%; BH: 2.18%; HH: 2.28%; SW: 4.56%; CG: 2.50%; AD: 3.14% | 2023 | [39] |
| Sheep | Kinect v2 | ICP; pass-through filtering; statistical filtering; RANSAC | 2 | BL / BH / HH / HW: < 5% | 2024 | [40] |
| Sheep | MV-EM120C GigE | YOLOv11n-Pose; CNN; ElasticNet | 51 | BL: 3.11%; BH: 1.93%; CW: 3.38%; CD: 2.52% | 2025 | [41] |
| Sheep | Kinect v2 | PointNet++ | 24 | BH: 1.67%; CW: 3.63%; HH: 1.14%; BL: 2.71%; CG: 3.57%; HW: 3.71% | 2025 | [42] |
| Sheep | Kinect DK | Improved PointNet++; CPD | 120 | BL: 3.34%; BH: 3.07%; HH: 3.32%; CD: 3.63%; CG: 2.81% | 2025 | [43] |

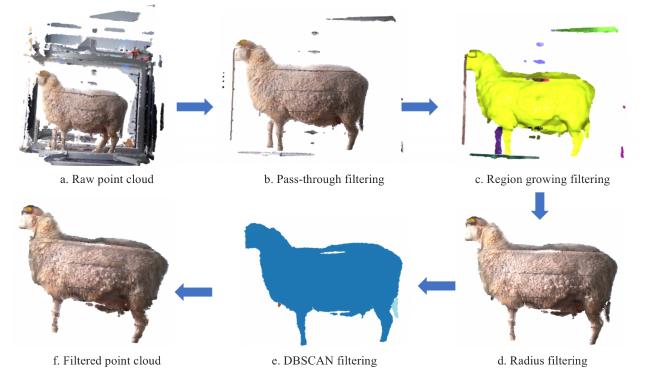

3.1 3D point cloud filtering

Fig. 10 Multi-algorithm fusion filtering process diagram of sheep

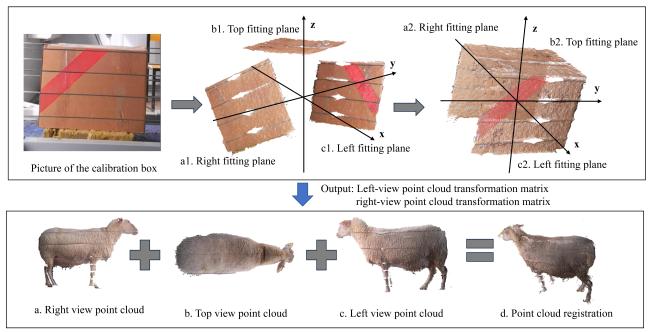
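Table 4 lists pass-through filtering and statistical filtering among the point cloud pre-processing steps (e.g. [40]). As a rough illustration of how the two are typically chained, the sketch below implements both in plain Python with a brute-force neighbour search. The function names, thresholds (`k`, `std_ratio`), and the toy cloud are assumptions for illustration only; production pipelines generally rely on a point cloud library with spatial indexing.

```python
import math
import statistics
from typing import List, Tuple

Point3 = Tuple[float, float, float]


def pass_through(points: List[Point3], axis: int, lo: float, hi: float) -> List[Point3]:
    """Keep only points whose coordinate on `axis` lies in [lo, hi]."""
    return [p for p in points if lo <= p[axis] <= hi]


def statistical_outlier_removal(points: List[Point3], k: int = 8,
                                std_ratio: float = 2.0) -> List[Point3]:
    """Drop points whose mean distance to their k nearest neighbours exceeds
    the global mean of that quantity by more than std_ratio standard deviations."""
    if len(points) <= k:
        return list(points)
    mean_knn = []
    for i, p in enumerate(points):
        # Brute force O(n^2): acceptable for a sketch, slow for real clouds.
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn.append(sum(dists[:k]) / k)
    threshold = statistics.fmean(mean_knn) + std_ratio * statistics.pstdev(mean_knn)
    return [p for p, m in zip(points, mean_knn) if m <= threshold]


# Toy cloud: a dense 5 x 5 x 4 grid standing in for the body surface,
# one far-away "floor" point and one isolated stray point above the body.
body = [(0.05 * i, 0.05 * j, 0.05 * h)
        for i in range(5) for j in range(5) for h in range(4)]
floor_point = (0.1, 0.1, -2.0)
stray_point = (0.1, 0.1, 1.0)
cloud = body + [floor_point, stray_point]

cropped = pass_through(cloud, axis=2, lo=-0.5, hi=1.5)   # removes floor_point
filtered = statistical_outlier_removal(cropped, k=8)      # removes stray_point
```

The pass-through step discards everything outside a coarse height range, and the statistical step then removes the isolated point that survived the crop, leaving only the 100 grid points.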

3.2 3D point cloud reconstruction

Fig. 11 Coarse registration of sheep point cloud using a calibration box

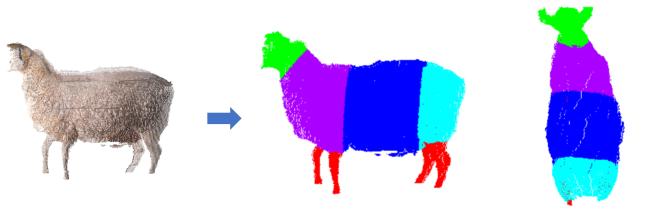

3.3 3D point cloud segmentation

Fig. 12 Schematic of sheep body point cloud segmentation for circumference measurement: a. Point cloud registration; b. Front view after segmentation; c. Top view after segmentation

3.3.1 Traditional geometric feature-based segmentation of sheep body parts

3.3.2 Deep learning-based segmentation of sheep body

3.4 Keypoint detection and recognition for 3D sheep body size measurement

3.4.1 Geometric feature-based keypoint recognition for sheep body

Table 5 Comparison of keypoint recognition methods based on manually constructed geometric features in sheep studies

| Subjects | Method | Principle | Advantages | Limitations | Work |
|---|---|---|---|---|---|
| Sheep | Normal-vector and curvature fusion simplification algorithm | Simplify point cloud by combining normal vectors and curvature, automatically extract body measurement points on cow back | Preserves key feature points, offers high extraction accuracy, and adapts to various postures | Relies heavily on point cloud quality and pre-processing; high computational complexity | [53] |
| Sheep | Spatial distribution statistical method | Based on spatial distribution features of the point cloud, set thresholds through pass-through filtering, combined with the extremum method and dimensionality-reduction projection to locate measurement points | High computational efficiency; real-time capability; robust to wool-thickness interference | Depends on fixed postures and prior knowledge of spatial proportions; limited adaptability across breeds and growth stages; sensitive to outlier points | [54] |

3.4.2 Deep learning-based keypoint detection for sheep body

3.5 Sheep posture normalization

Table 6 Comparison of sheep pose normalization methods

| Subjects | Method | Pose-handling strategy | Advantages | Limitations | Work |
|---|---|---|---|---|---|
| Sheep | Global-local non-rigid warping | Use dynamic position encoding with a similarity transformation group to obtain the pose, followed by template alignment | No reliance on strict symmetry or complete point clouds, enabling non-rigid pose recovery | Heavy computation; template library required | [57] |
| Sheep | CNN micro-pose classification + ElasticNet regression | An error-correction model based on CNN micro-pose classification and ElasticNet regression | Lightweight deployment | Still sensitive to resolution/angle; extreme poses fail | [41] |
| Sheep | PointNet++ region segmentation and re-projected coordinate frame | Multi-view local pose normalization, PointNet++ segmentation, re-projected coordinate frame | Suppresses drifting landmarks under sudden poses | Relies solely on geometric coordinates without fusing RGB or other modalities, resulting in limited discriminative power under occlusion or specular reflection | [42] |

3.6 Sheep body size parameter calculation

3.6.1 Body length

3.6.2 Body height

3.6.3 Shank circumference

Table 7 The relationship between body weight and body size of sheep

| Animal numbers | Body size indicators | Number of indicators | Year | Work |
|---|---|---|---|---|
| 882 | BL, BH, CG, SC, HH, HW, etc. | 7 | 2018 | [63] |
| 94 | BL, BH, CG, SC | 4 | 2018 | [64] |
| 50 | BL, BH, CD, CG, CW, SC, HW, etc. | 9 | 2019 | [65] |
| 706 | BL, BH, CG, SC | 4 | 2020 | [66] |
| 145 | BL, BH, CD, CG, CW, HW | 6 | 2020 | [67] |
| 32 | BL, BH, CD, CG, CW, SC | 6 | 2021 | [68] |
| 136 | BL, BH, CD, CG, CW, SC, SH, HW | 8 | 2021 | [69] |
| 745 | BL, BH, CD, CG, CW, SC, HH | 7 | 2021 | [70] |
| 653 | BL, BH, CD, CG, CW, HW | 6 | 2021 | [71] |
| 408 | BL, BH, CD, CG, CW, SC, etc. | 10 | 2022 | [72] |
| 558 | BL, BH, CG | 3 | 2022 | [73] |
| 12 500 | BL, BH, CD, CG, CW, SC, HH, BW, etc. | 13 | 2022 | [74] |
| 507 | BL, BH, CG, etc. | 5 | 2022 | [75] |
| 56 | BL, BH, CG, SC, HH, HW | 6 | 2022 | [76] |
| 100 | BL, CG, HH, SH | 4 | 2022 | [77] |
| 334 | BL, BH, CG, SC | 4 | 2023 | [78] |
| 1 289 | BL, BH, CD, CG, CW, SC | 6 | 2023 | [58] |
| 150 | BL, CG, SH | 3 | 2023 | [79] |
| 210 | BL, CD, CG, CW, HH, BH, etc. | 7 | 2023 | [80] |
| 239 | BL, BH, CD, CG, CW, etc. | 6 | 2024 | [81] |
| 916 | BL, BH, CG, SC | 4 | 2024 | [82] |
| 239 | BL, BH, CG, CW, SC, etc. | 6 | 2024 | [83] |
| 100 | BL, CG, HH, HW, AD, SW, etc. | 14 | 2024 | [84] |

3.6.4 Hip height

3.6.5 Chest depth

3.6.6 Chest width

3.6.7 Hip width

3.6.8 Chest girth

4 2D-3D integrated livestock body size measurement

Table 8 Accuracy comparison of 2D, 3D, and 2D-3D body measurement methods in sheep and other livestock

| Subjects | Methods | Animal numbers | Body traits & accuracy | Year | Work |
|---|---|---|---|---|---|
| Sheep | 2D | 27 | BL: 2.03%; BH: 1.13%; CD: 4.45%; CW: 2.25%; HH: 1.54%; HW: 2.41% | 2018 | [5] |
| Cattle | 2D | NA | BL: 0.06%; BH: 2.28% | 2020 | [7] |
| Sheep | 2D | 55 | BL: 2.42%; BH: 1.86%; HH: 2.07%; CD: 2.72% | 2022 | [11] |
| Cattle | 2D | 30 | BL: 7.55%; BH: 6.75%; CD: 8.00%; SC: 8.97% | 2024 | [13] |
| Sheep | 3D | 1 | BL / BH / CD / HH: 2.36% | 2020 | [34] |
| Cattle | 3D | 103 | BL / BH / CD / HH: < 3% | 2020 | [35] |
| Sheep | 3D | 239 | BL: 0.75 cm; HW: 0.38 cm; BH: 1.23 cm; SW: 0.33 cm; HH: 0.66 cm | 2021 | [31] |
| Cattle | 3D | 10 | BL: 2.3%; BH: 2.8%; CG: 2.6%; AD: 2.8%; SW: 1.6% | 2022 | [36] |
| Sheep | 3D | 2 | BL / BH / HH / HW: < 5% | 2024 | [40] |
| Sheep | 3D | 24 | BH: 1.67%; CW: 3.63%; HH: 1.14%; BL: 2.71%; CG: 3.57%; HW: 3.71% | 2025 | [42] |
| Cattle | 2D-3D | NA | BL: 2.14%; BH: 0.76%; HH: 0.76% | 2022 | [86] |
| Pig | 2D-3D | NA | BL: 2.33%; BH: 1.92%; SW: 1.29%; HW: 1.26% | 2021 | [87] |
| Horse | 2D-3D | 80 | BH / BL / HH / CG / AD: < 2.29% | 2024 | [88] |

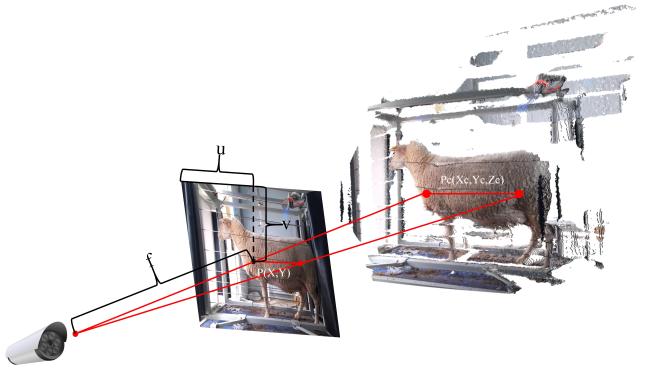

4.1 Integration framework and key technical workflow

Fig. 13 Schematic diagram of 2D-to-3D keypoint projection for sheep body measurement (legend: camera viewpoint, 2D pixel point, 3D point)
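The projection in Fig. 13 can be illustrated with the standard pinhole camera model: a 2D keypoint with pixel coordinates (u, v) and a depth reading is lifted to a 3D point in the camera frame, and body dimensions are then measured between 3D points. The sketch below is a minimal example under assumed intrinsics (`fx`, `fy`, `cx`, `cy`) and made-up keypoints; it ignores lens distortion and depth-to-colour alignment, which a real RGB-D pipeline would need to handle.

```python
import math
from typing import Tuple

Point3 = Tuple[float, float, float]


def backproject(u: float, v: float, depth_m: float,
                fx: float, fy: float, cx: float, cy: float) -> Point3:
    """Lift pixel (u, v) with depth (metres) to camera coordinates (pinhole model)."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


# Hypothetical intrinsics for a 640 x 480 depth-aligned image.
FX, FY, CX, CY = 600.0, 600.0, 320.0, 240.0

# Hypothetical 2D keypoints (pixels) with depth readings (metres).
withers_3d = backproject(320.0, 150.0, 2.0, FX, FY, CX, CY)
hoof_3d = backproject(320.0, 360.0, 2.0, FX, FY, CX, CY)

# Euclidean distance between the two 3D keypoints, in metres.
height_m = math.dist(withers_3d, hoof_3d)
```

With these inputs the two keypoints map to (0, -0.3, 2.0) and (0, 0.4, 2.0), giving a distance of about 0.7 m. Because the measurement is taken in metric 3D space, it does not need the pixel-to-centimetre scale factor that pure 2D methods require.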