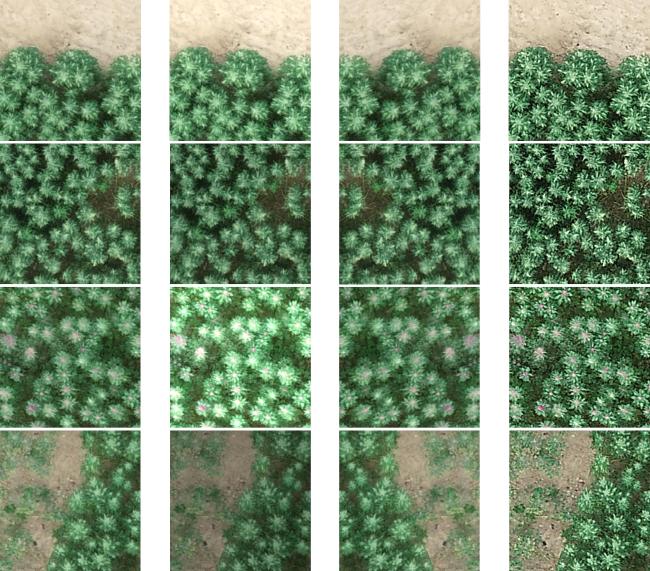

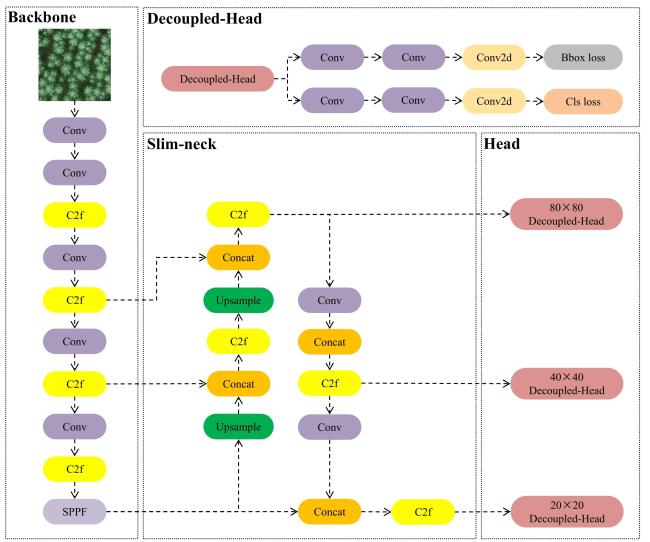

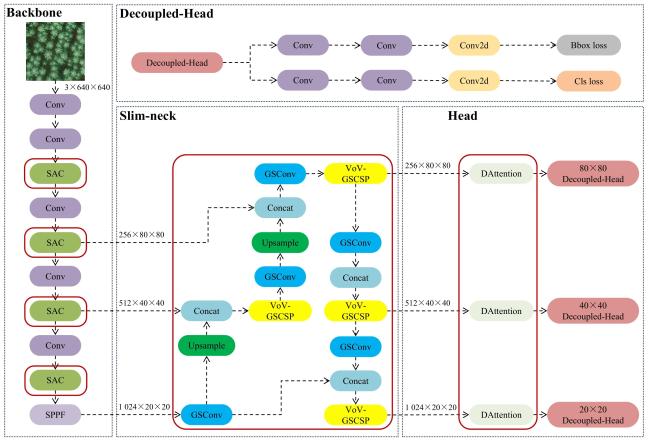

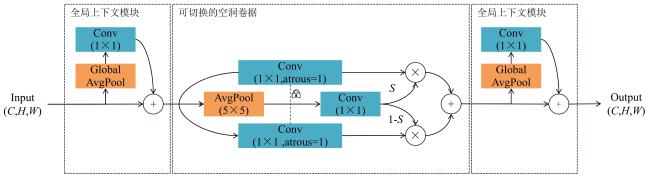

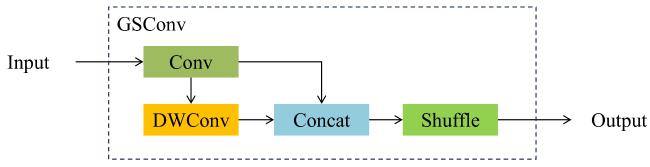

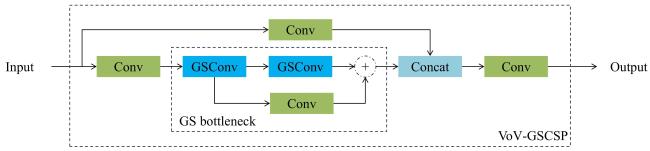

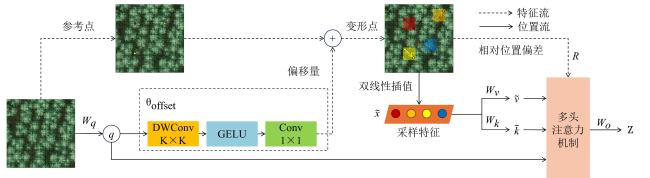

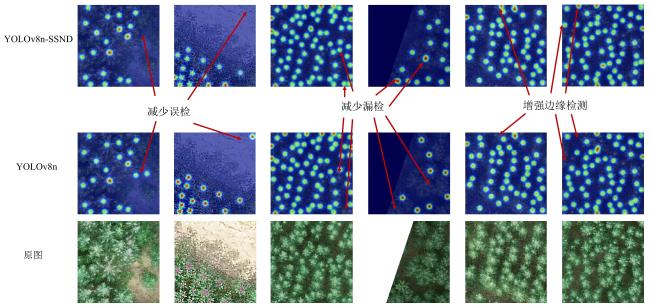

[Objective] The Chenopodium quinoa panicle is a critical phenotypic indicator for estimating crop yield and evaluating plant growth condition. Accurate and efficient recognition of panicles in complex field environments is therefore important for intelligent agriculture, yield prediction, and automated crop management. However, field imagery acquired by unmanned aerial vehicles (UAVs) often exhibits diverse panicle morphology, uneven illumination, overlapping occlusion, and background interference, all of which pose substantial challenges for conventional object detection algorithms. To address these issues, a lightweight object detection model named YOLOv8n-SSND (YOLOv8n with Switchable Atrous Convolution, Slim Neck, and Deformable Attention) is proposed and optimized specifically for UAV-based Chenopodium quinoa panicle identification. It aims to improve detection accuracy and inference efficiency while keeping the computational cost low enough for real-time deployment on embedded UAV hardware. [Methods] The proposed model was constructed based on the YOLOv8n and YOLOv11n frameworks and incorporated several improvements tailored to small-object agricultural detection. To enhance the capture of multi-scale and high-dimensional semantic features, the switchable atrous convolution (SAC) module was embedded into the backbone network. This module dynamically adjusted its receptive field according to spatial context, enabling more precise extraction of the local and global texture details of Chenopodium quinoa panicles.
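The switching idea behind SAC can be illustrated with a minimal one-dimensional sketch in plain Python. It is not the implementation used in this work: the function names, the 5-sample averaging window, the dilation rates of 1 and 3, and the sharing of one kernel between both branches are all assumptions made for illustration. Each output position blends a small-rate and a large-rate atrous convolution, weighted by a switch value computed from the local context.

```python
import math

def dilated_conv1d(signal, kernel, dilation):
    """Same-length 1D atrous convolution with zero padding (hypothetical helper)."""
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = i + (k - half) * dilation  # taps are spaced `dilation` samples apart
            if 0 <= j < len(signal):
                acc += w * signal[j]
        out.append(acc)
    return out

def switch_values(signal, window=5, gain=1.0, bias=0.0):
    """Per-position switch in (0, 1): sigmoid of a local average (assumed form)."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        mean = sum(signal[lo:hi]) / (hi - lo)
        out.append(1.0 / (1.0 + math.exp(-(gain * mean + bias))))
    return out

def switchable_atrous_conv1d(signal, kernel, small_rate=1, large_rate=3):
    """Blend a small and a large receptive field per position.

    Assumption: both branches share the same kernel; a trained module
    would typically learn the switch and any per-branch weight offset.
    """
    s = switch_values(signal)
    near = dilated_conv1d(signal, kernel, small_rate)
    far = dilated_conv1d(signal, kernel, large_rate)
    return [si * n + (1.0 - si) * f for si, n, f in zip(s, near, far)]

if __name__ == "__main__":
    x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]
    print(switchable_atrous_conv1d(x, [1.0, 0.0, 1.0]))
```

Where the switch is close to 1 the output follows the small-rate branch (fine local texture), and where it is close to 0 it follows the large-rate branch (wider context), which is the sense in which the receptive field adapts to spatial context.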
To reduce redundant parameters while maintaining computational efficiency, a lightweight slim-neck feature fusion layer was designed. It strengthened the integration of shallow spatial information with deep semantic features, allowing the network to maintain high accuracy without increasing model complexity. Additionally, a deformable attention (DA) mechanism was introduced to focus adaptively on regions rich in panicle-related features while suppressing irrelevant background noise. This mechanism assigned dynamic weights across both spatial and channel dimensions, improving the model's robustness to the occlusions, illumination variations, and complex field textures commonly encountered in UAV images. [Results and Discussions] Field experiments were conducted using UAV images of Chenopodium quinoa plots collected under different environmental conditions and at different growth stages. The proposed YOLOv8n-SSND model achieved a mean average precision at an intersection-over-union threshold of 0.5 (mAP50) of 94.3%, outperforming all baseline and comparative models. Specifically, compared with YOLOv11n-SSND, YOLOv11n, YOLOv12n, YOLOv7, YOLOv5s, single shot multibox detector (SSD), fast region-based convolutional neural network (Fast R-CNN), and YOLOv8n, the proposed model improved mAP50 by 0.7, 0.9, 2.1, 1.4, 2.0, 23.1, 19.6, and 1.8 percentage points, respectively, with the largest gains over SSD and Fast R-CNN. In terms of computational efficiency, the inference speed reached 166.7 f/s, a 26.7% increase over the YOLOv8n baseline, which supports real-time detection on UAV-mounted onboard processors. Moreover, the computational cost was reduced to 6.8 GFLOPs, a 16.0% reduction compared with the baseline model.
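The comparator values implied by these figures can be recovered with simple arithmetic, sketched below. The derived numbers are not reported in this abstract: they assume the accuracy gains are absolute percentage points of mAP50 and that the speed and operation-count percentages are relative to the YOLOv8n baseline. All variable names are illustrative.

```python
# Reported values from the abstract.
PROPOSED_MAP50 = 94.3      # percent
PROPOSED_FPS = 166.7       # frames per second
PROPOSED_GFLOPS = 6.8

# Reported gains of the proposed model, in percentage points of mAP50.
GAINS = {
    "YOLOv11n-SSND": 0.7, "YOLOv11n": 0.9, "YOLOv12n": 2.1, "YOLOv7": 1.4,
    "YOLOv5s": 2.0, "SSD": 23.1, "Fast R-CNN": 19.6, "YOLOv8n": 1.8,
}

# Implied comparator mAP50 (assumption: gains are absolute differences).
implied_map50 = {name: round(PROPOSED_MAP50 - gain, 1) for name, gain in GAINS.items()}

# Implied YOLOv8n baseline speed and cost (assumption: relative changes).
implied_baseline_fps = PROPOSED_FPS / (1 + 0.267)        # about 131.6 f/s
implied_baseline_gflops = PROPOSED_GFLOPS / (1 - 0.160)  # about 8.1 GFLOPs

# Per-frame latency of the proposed model.
latency_ms = 1000 / PROPOSED_FPS                         # about 6.0 ms

if __name__ == "__main__":
    for name, value in implied_map50.items():
        print(f"{name}: {value:.1f}%")
    print(f"baseline speed: {implied_baseline_fps:.1f} f/s")
    print(f"baseline cost: {implied_baseline_gflops:.1f} GFLOPs")
    print(f"latency: {latency_ms:.1f} ms per frame")
```

Under these assumptions the YOLOv8n baseline sits at roughly 92.5% mAP50, 131.6 f/s, and 8.1 GFLOPs, and the proposed model processes one frame in about 6 ms.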
The experimental comparison also indicated that integrating SAC enhanced the model's sensitivity to complex spatial patterns, while the DA module improved feature selectivity and helped prevent overfitting to background textures. The slim-neck design reduced parameter redundancy and facilitated smooth feature propagation across layers. [Conclusions] The YOLOv8n-SSND model balances detection accuracy, inference speed, and computational cost, making it well suited to real-time UAV-based agricultural monitoring. The experimental results confirm that the model provides high-precision detection of Chenopodium quinoa panicles together with high inference efficiency at low computational cost. These characteristics make it a promising solution for UAV-deployed intelligent agricultural systems, where power and processing capacity are limited. Furthermore, the proposed method provides a technical foundation for large-scale, automated monitoring of Chenopodium quinoa growth, supporting yield estimation, phenotypic analysis, and precision crop management.