0 Introduction

1 Materials and methods

1.1 Dataset

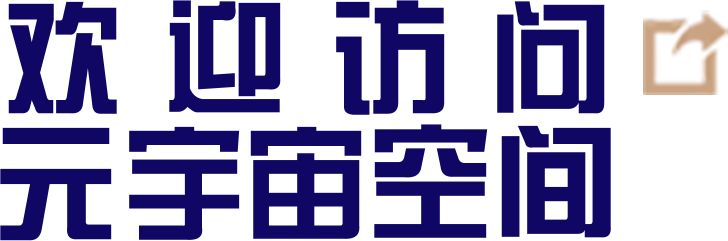

1.1.1 Data collection

Fig. 1 Data acquisition devices of FPE study

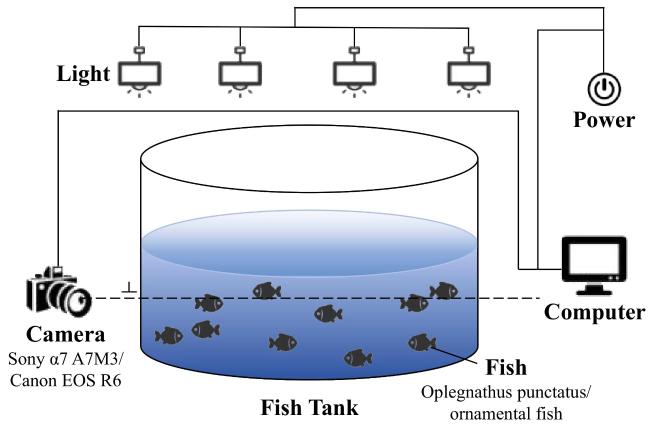

1.1.2 Data annotation

Table 1 The detailed description of the key point annotation of fish body

| Number | Name | Location |
|---|---|---|
| 1 | belly | Anterior end of the pelvic fin |
| 2 | eye_left | Left eye |
| 3 | eye_right | Right eye |
| 4 | back | Anterior end of dorsal fin |
| 5 | tail_top | Anterior end of tail |
| 6 | tail_up | Top of tail |
| 7 | tail_low | Bottom of tail |

Fig. 2 The annotation sample of experimental dataset of fish body

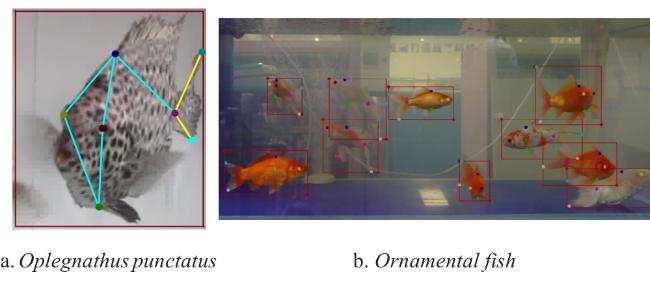

1.1.3 Data augmentation

Fig. 3 Sample images of Oplegnathus punctatus during data augmentation

Table 2 The amount and distribution of the proposed dataset

| Dataset | Training set images | Test set images | Image total | Fish total |
|---|---|---|---|---|
| Oplegnathus punctatus images before augmentation | 400 | 100 | 500 | 800 |
| Oplegnathus punctatus images after augmentation | 800 | 100 | 900 | 1 800 |
| Ornamental fish images | 240 | 60 | 300 | 3 000 |
| Final dataset total | 1 040 | 160 | 1 200 | 4 800 |

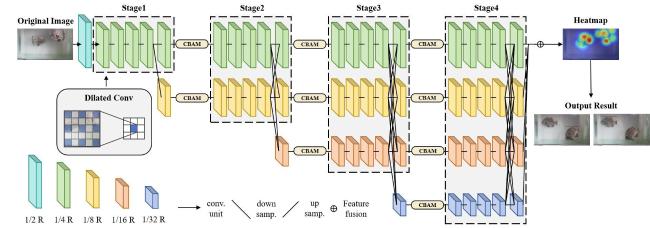

1.2 HPFPE

1.2.1 Framework

Fig. 4 The structure of HPFPE

Table 3 Configuration of multi-scale feature maps produced by each stage of HPFPE study

| Layer | Multi-scale feature map | Number of branches | Number of blocks in each branch | Number of exchange units |
|---|---|---|---|---|
| Stage 1 | 1/4 | 1 | 4 | 0 |
| Stage 2 | 1/4, 1/8 | 2 | 4, 4 | 1 |
| Stage 3 | 1/4, 1/8, 1/16 | 3 | 4, 4, 4 | 4 |
| Stage 4 | 1/4, 1/8, 1/16, 1/32 | 4 | 4, 4, 4, 4 | 3 |
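To make the scales in Table 3 concrete, the following minimal sketch computes the spatial size of each branch for the two input sizes used in the experiments (256×192 and 384×288). The function name `branch_resolutions` and the dictionary `STAGE_STRIDES` are illustrative names, not part of the HPFPE code, and the sketch assumes each scale 1/s is obtained by integer division of both input dimensions by s.

```python
# Down-sampling factors of the branches present in each stage (from Table 3).
STAGE_STRIDES = {
    "Stage 1": [4],
    "Stage 2": [4, 8],
    "Stage 3": [4, 8, 16],
    "Stage 4": [4, 8, 16, 32],
}


def branch_resolutions(input_size, strides):
    """Return the feature-map size of every branch for a given input size.

    input_size: the two spatial dimensions of the input, e.g. (256, 192).
    strides: down-sampling factors, e.g. [4, 8] for scales 1/4 and 1/8.
    """
    first, second = input_size
    return [(first // s, second // s) for s in strides]


if __name__ == "__main__":
    for size in [(256, 192), (384, 288)]:
        for stage, strides in STAGE_STRIDES.items():
            print(size, stage, branch_resolutions(size, strides))
```

Under these assumptions, the four branches of Stage 4 have sizes 64×48, 32×24, 16×12 and 8×6 for a 256×192 input.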

1.2.2 CBAM module

1.2.3 Dilated convolution

1.2.4 Evaluation metrics

2 Experiment and results

2.1 Experimental configuration

Table 4 Experimental configuration of HPFPE study

| Configuration | Parameter |
|---|---|
| CPU | Intel(R) Xeon(R) Silver 4210R CPU |
| GPU | NVIDIA Tesla V100 |
| Operating system | Ubuntu 18.04.6 LTS |
| Deep learning framework | PyTorch 1.8.1 |
| Programming language | Python 3.8 |

Table 5 Widths of Stage 2 to Stage 4 in HRNet-W32 and HRNet-W48

| Stage | HRNet-W32 | HRNet-W48 |
|---|---|---|
| Stage 2 | 64 | 96 |
| Stage 3 | 128 | 192 |
| Stage 4 | 256 | 384 |
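The widths in Table 5 follow a simple doubling pattern, which the sketch below reproduces. It assumes that each table entry is the channel width of the lowest-resolution branch added at that stage, and that the base width (32 or 48) is the number in the backbone name. The function name `new_branch_width` is hypothetical and used only for illustration.

```python
def new_branch_width(base_width, stage):
    """Width of the branch added at `stage`, assuming it doubles per stage.

    base_width: width of the highest-resolution branch (assumed 32 or 48).
    stage: stage index, where Stage 1 has only the base-width branch.
    """
    return base_width * 2 ** (stage - 1)


if __name__ == "__main__":
    for base in (32, 48):
        widths = [new_branch_width(base, stage) for stage in (2, 3, 4)]
        print(f"base width {base}: {widths}")
```

With these assumptions, the computed values match the table: 64, 128, 256 for a base width of 32, and 96, 192, 384 for a base width of 48.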

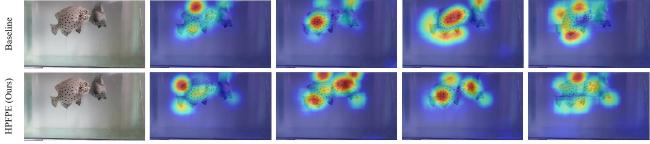

2.2 Comparison of the pose estimation results with the original HRNet

Table 6 Comparison of HPFPE with HRNet on Oplegnathus punctatus data

| Method | Backbone | Input size | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|---|
| HRNet | HRNet-W32 | 256×192 | 71.50 | 96.72 | 76.69 | 75.45 | 97.00 | 79.00 |
| HRNet | HRNet-W32 | 384×288 | 72.70 | 96.95 | 78.77 | 76.35 | 97.50 | 81.00 |
| HRNet | HRNet-W48 | 256×192 | 71.15 | 94.53 | 77.39 | 75.25 | 95.50 | 80.50 |
| HRNet | HRNet-W48 | 384×288 | 72.84 | 96.66 | 80.74 | 76.60 | 97.00 | 82.50 |
| HPFPE (Ours) | HRNet-W32 | 256×192 | 72.12 | 97.98 | 74.87 | 76.30 | 98.50 | 78.50 |
| HPFPE (Ours) | HRNet-W32 | 384×288 | 74.05 | 99.01 | 75.82 | 77.85 | 99.50 | 80.00 |
| HPFPE (Ours) | HRNet-W48 | 256×192 | 72.91 | 96.90 | 78.13 | 76.65 | 97.00 | 80.50 |
| HPFPE (Ours) | HRNet-W48 | 384×288 | 74.12 | 97.89 | 81.99 | 77.60 | 98.00 | 84.00 |

Fig. 5 Heatmap comparison of HPFPE and baseline on Oplegnathus punctatus data. a. Original image b. HRNet-W32/256×192 c. HRNet-W32/384×288 d. HRNet-W48/256×192 e. HRNet-W48/384×288

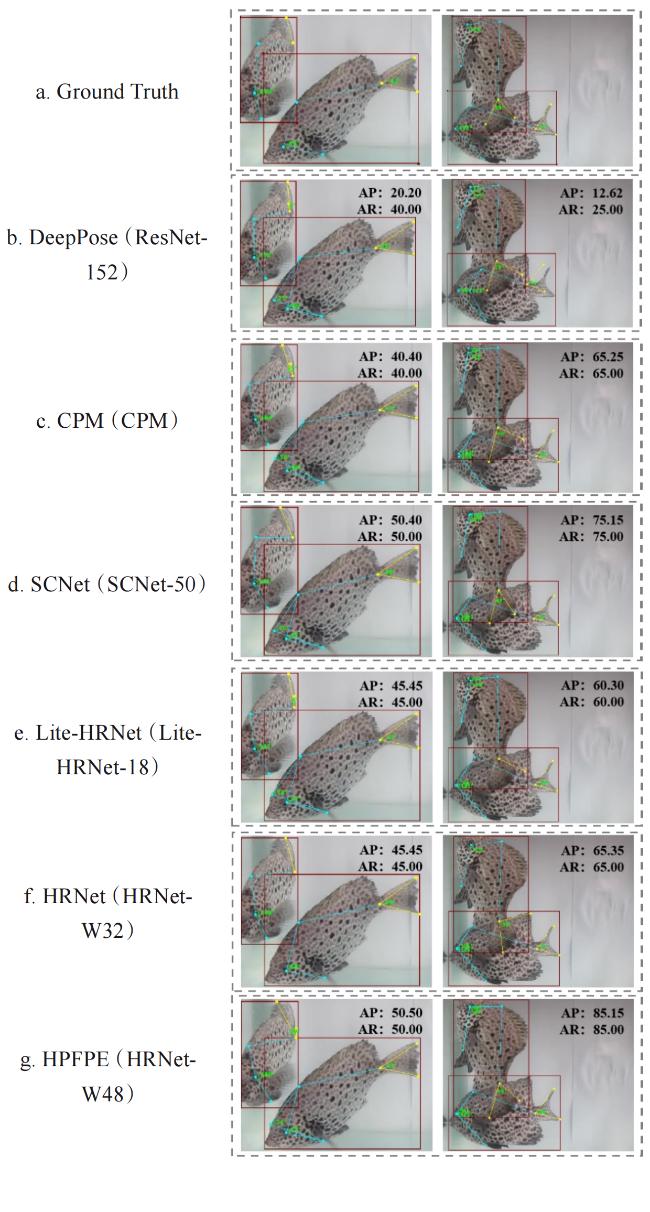

2.3 Comparison with other methods

Table 7 Comparison with other methods on Oplegnathus punctatus data when input size is 256×192

| Method | Backbone | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|
| DeepPose | ResNet-50 | 42.24 | 83.66 | 36.89 | 57.30 | 90.00 | 58.50 |
| DeepPose | ResNet-101 | 41.46 | 85.08 | 35.71 | 57.05 | 91.00 | 58.00 |
| DeepPose | ResNet-152 | 43.04 | 85.46 | 37.07 | 58.40 | 91.00 | 59.00 |
| CPM | CPM | 62.22 | 93.53 | 67.25 | 66.45 | 95.00 | 71.00 |
| SCNet | SCNet-50 | 70.15 | 96.86 | 74.54 | 73.95 | 97.00 | 78.00 |
| SCNet | SCNet-101 | 69.45 | 95.78 | 74.36 | 74.10 | 96.50 | 78.50 |
| Lite-HRNet | Lite-HRNet-18 | 68.01 | 92.77 | 73.10 | 72.00 | 94.00 | 76.00 |
| Lite-HRNet | Lite-HRNet-30 | 67.23 | 96.64 | 73.01 | 70.55 | 97.00 | 75.00 |
| HPFPE (Ours) | HRNet-W32 | 72.12 | 97.98 | 74.87 | 76.30 | 98.50 | 78.50 |
| HPFPE (Ours) | HRNet-W48 | 72.91 | 96.90 | 78.13 | 76.65 | 97.00 | 80.50 |

Table 8 Comparison with other methods on Oplegnathus punctatus data when input size is 384×288

| Method | Backbone | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|
| CPM | CPM | 67.26 | 94.42 | 71.60 | 71.85 | 95.50 | 76.00 |
| SCNet | SCNet-50 | 71.62 | 96.82 | 75.35 | 75.20 | 97.50 | 78.50 |
| SCNet | SCNet-101 | 71.67 | 96.82 | 77.94 | 75.60 | 97.50 | 81.00 |
| Lite-HRNet | Lite-HRNet-18 | 69.96 | 97.76 | 72.71 | 73.60 | 98.00 | 76.50 |
| Lite-HRNet | Lite-HRNet-30 | 70.01 | 93.64 | 75.72 | 73.75 | 95.00 | 78.00 |
| HPFPE (Ours) | HRNet-W32 | 74.05 | 99.01 | 75.82 | 77.85 | 99.50 | 80.00 |
| HPFPE (Ours) | HRNet-W48 | 74.12 | 97.89 | 81.99 | 77.60 | 98.00 | 84.00 |

Fig. 6 Visualization results of different methods on Oplegnathus punctatus data

2.4 Comparison of CBAM pose estimation results at different locations

Table 9 Pose estimation results on Oplegnathus punctatus data obtained by adding CBAM modules at different positions of HRNet

| Method | Backbone | Input size | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|---|
| HRNet+CBAMfront | HRNet-W32 | 256×192 | 71.85 | 95.90 | 78.60 | 75.80 | 96.50 | 81.50 |
| HRNet+CBAMfront | HRNet-W32 | 384×288 | 73.46 | 96.88 | 79.33 | 76.75 | 97.50 | 82.00 |
| HRNet+CBAMfront | HRNet-W48 | 256×192 | 71.37 | 96.93 | 76.45 | 75.15 | 97.50 | 80.50 |
| HRNet+CBAMfront | HRNet-W48 | 384×288 | 73.23 | 96.66 | 77.90 | 76.45 | 97.00 | 80.50 |
| HRNet+CBAMfuse | HRNet-W32 | 256×192 | 70.95 | 95.94 | 77.42 | 74.85 | 96.50 | 80.50 |
| HRNet+CBAMfuse | HRNet-W32 | 384×288 | 71.93 | 95.78 | 75.41 | 76.10 | 96.50 | 78.50 |
| HRNet+CBAMfuse | HRNet-W48 | 256×192 | 71.09 | 96.81 | 77.20 | 75.75 | 97.00 | 81.00 |
| HRNet+CBAMfuse | HRNet-W48 | 384×288 | 69.61 | 96.96 | 73.95 | 73.05 | 97.00 | 76.50 |
| HRNet+CBAMstage | HRNet-W32 | 256×192 | 71.94 | 93.83 | 80.43 | 75.90 | 95.00 | 82.50 |
| HRNet+CBAMstage | HRNet-W32 | 384×288 | 74.02 | 95.94 | 80.49 | 77.40 | 96.50 | 82.50 |
| HRNet+CBAMstage | HRNet-W48 | 256×192 | 71.86 | 95.79 | 81.60 | 76.00 | 96.00 | 84.00 |
| HRNet+CBAMstage | HRNet-W48 | 384×288 | 73.40 | 97.85 | 78.87 | 76.75 | 98.00 | 81.00 |

2.5 Comparison with other attention mechanisms

Table 10 Comparison of CBAM with other attention mechanisms for pose estimation

| Attention mechanism | Backbone | Input size | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|---|
| SE[24] | HRNet-W32 | 256×192 | 71.53 | 97.89 | 77.95 | 76.30 | 98.00 | 81.00 |
| SE[24] | HRNet-W32 | 384×288 | 73.23 | 96.88 | 78.60 | 77.20 | 97.50 | 81.00 |
| SE[24] | HRNet-W48 | 256×192 | 71.55 | 97.95 | 81.97 | 74.95 | 98.50 | 84.00 |
| SE[24] | HRNet-W48 | 384×288 | 72.79 | 96.81 | 78.37 | 76.25 | 97.00 | 81.00 |
| ECA[25] | HRNet-W32 | 256×192 | 71.59 | 96.95 | 76.18 | 75.30 | 97.50 | 79.50 |
| ECA[25] | HRNet-W32 | 384×288 | 72.49 | 97.89 | 79.66 | 77.35 | 98.00 | 83.00 |
| ECA[25] | HRNet-W48 | 256×192 | 71.82 | 94.56 | 76.64 | 75.70 | 95.50 | 79.00 |
| ECA[25] | HRNet-W48 | 384×288 | 72.79 | 96.81 | 78.37 | 76.25 | 97.00 | 81.00 |
| CA[26] | HRNet-W32 | 256×192 | 71.02 | 94.94 | 77.60 | 74.50 | 95.50 | 80.00 |
| CA[26] | HRNet-W32 | 384×288 | 73.03 | 97.95 | 77.97 | 75.75 | 98.00 | 80.00 |
| CA[26] | HRNet-W48 | 256×192 | 70.55 | 94.97 | 77.74 | 74.40 | 95.50 | 80.50 |
| CA[26] | HRNet-W48 | 384×288 | 72.82 | 97.86 | 77.39 | 76.75 | 98.00 | 80.00 |
| LSKblock[27] | HRNet-W32 | 256×192 | 71.71 | 97.89 | 77.40 | 75.35 | 98.00 | 80.00 |
| LSKblock[27] | HRNet-W32 | 384×288 | 72.26 | 96.93 | 77.37 | 75.55 | 97.50 | 79.00 |
| LSKblock[27] | HRNet-W48 | 256×192 | 71.83 | 95.85 | 78.14 | 75.75 | 96.50 | 81.50 |
| LSKblock[27] | HRNet-W48 | 384×288 | 71.89 | 95.95 | 76.91 | 75.00 | 96.00 | 79.00 |
| CBAM[23] | HRNet-W32 | 256×192 | 71.94 | 93.83 | 80.43 | 75.90 | 95.00 | 82.50 |
| CBAM[23] | HRNet-W32 | 384×288 | 74.02 | 95.94 | 80.49 | 77.40 | 96.50 | 82.50 |
| CBAM[23] | HRNet-W48 | 256×192 | 71.86 | 95.79 | 81.60 | 76.00 | 96.00 | 84.00 |
| CBAM[23] | HRNet-W48 | 384×288 | 73.40 | 97.85 | 78.87 | 76.75 | 98.00 | 81.00 |

2.6 Ablation experiment

Table 11 Ablation experiments of HPFPE on Oplegnathus punctatus data

| Backbone | Input size | Dilated convolution | CBAM | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|---|---|
| HRNet-W32 | 256×192 |  |  | 71.50 | 96.72 | 76.69 | 75.45 | 97.00 | 79.00 |
| HRNet-W32 | 256×192 | √ |  | 71.68 | 95.87 | 79.83 | 75.50 | 96.50 | 82.00 |
| HRNet-W32 | 256×192 |  | √ | 71.94 | 93.83 | 80.43 | 75.90 | 95.00 | 82.50 |
| HRNet-W32 | 256×192 | √ | √ | 72.12 | 97.98 | 74.87 | 76.30 | 98.50 | 78.50 |
| HRNet-W32 | 384×288 |  |  | 72.70 | 96.95 | 78.77 | 76.35 | 97.50 | 81.00 |
| HRNet-W32 | 384×288 | √ |  | 73.09 | 96.95 | 78.29 | 76.95 | 97.50 | 80.50 |
| HRNet-W32 | 384×288 |  | √ | 74.02 | 95.94 | 80.49 | 77.40 | 96.50 | 82.50 |
| HRNet-W32 | 384×288 | √ | √ | 74.05 | 99.01 | 75.82 | 77.85 | 99.50 | 80.00 |
| HRNet-W48 | 256×192 |  |  | 71.15 | 94.53 | 77.39 | 75.25 | 95.50 | 80.50 |
| HRNet-W48 | 256×192 | √ |  | 72.11 | 96.83 | 77.80 | 75.95 | 97.50 | 80.50 |
| HRNet-W48 | 256×192 |  | √ | 71.86 | 95.79 | 81.60 | 76.00 | 96.00 | 84.00 |
| HRNet-W48 | 256×192 | √ | √ | 72.91 | 96.90 | 78.13 | 76.65 | 97.00 | 80.50 |
| HRNet-W48 | 384×288 |  |  | 72.84 | 96.66 | 80.74 | 76.60 | 97.00 | 82.50 |
| HRNet-W48 | 384×288 | √ |  | 73.49 | 96.89 | 79.16 | 77.15 | 97.00 | 82.00 |
| HRNet-W48 | 384×288 |  | √ | 73.40 | 97.85 | 78.87 | 76.75 | 98.00 | 81.00 |
| HRNet-W48 | 384×288 | √ | √ | 74.12 | 97.89 | 81.99 | 77.60 | 98.00 | 84.00 |

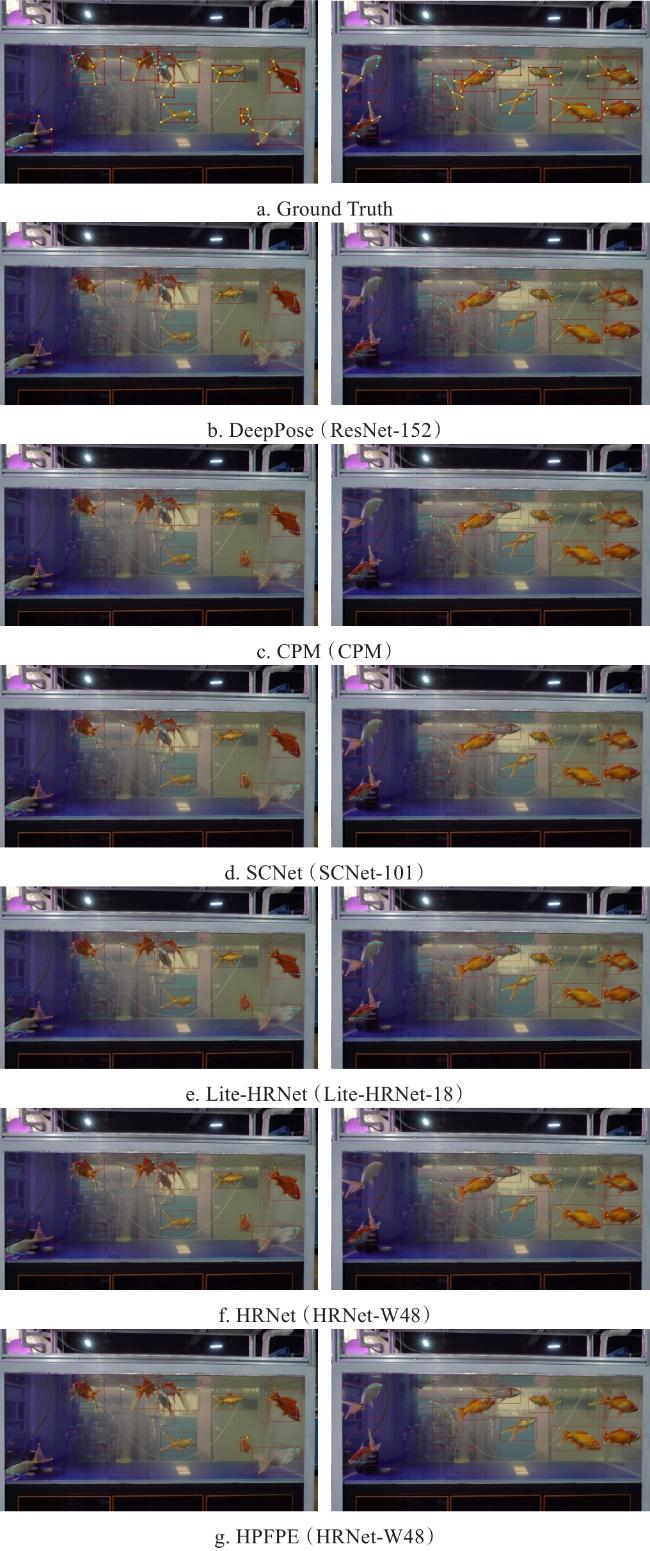

2.7 Comparison on ornamental fish data

Table 12 Comparison with five methods on ornamental fish data

| Method | Backbone | Input size | AP/% | AP50/% | AP75/% | AR/% | AR50/% | AR75/% |
|---|---|---|---|---|---|---|---|---|
| DeepPose | ResNet-50 | 256×192 | 4.05 | 21.50 | 0.20 | 11.25 | 40.00 | 2.50 |
| DeepPose | ResNet-101 | 256×192 | 7.66 | 30.80 | 0.00 | 13.75 | 50.00 | 0.00 |
| DeepPose | ResNet-152 | 256×192 | 8.48 | 32.07 | 0.08 | 16.00 | 50.00 | 2.50 |
| CPM | CPM | 256×192 | 27.25 | 84.78 | 6.01 | 34.50 | 85.00 | 17.50 |
| CPM | CPM | 384×288 | 33.76 | 89.88 | 18.92 | 42.50 | 90.00 | 37.50 |
| SCNet | SCNet-50 | 256×192 | 44.04 | 89.89 | 40.68 | 51.25 | 90.00 | 55.00 |
| SCNet | SCNet-50 | 384×288 | 49.00 | 85.20 | 50.85 | 55.50 | 87.50 | 62.50 |
| SCNet | SCNet-101 | 256×192 | 47.20 | 94.06 | 44.68 | 54.25 | 95.00 | 55.00 |
| SCNet | SCNet-101 | 384×288 | 50.37 | 93.88 | 56.17 | 58.50 | 95.00 | 65.00 |
| Lite-HRNet | Lite-HRNet-18 | 256×192 | 39.67 | 89.97 | 30.35 | 47.00 | 90.00 | 45.00 |
| Lite-HRNet | Lite-HRNet-18 | 384×288 | 45.72 | 94.06 | 35.12 | 52.50 | 95.00 | 50.00 |
| Lite-HRNet | Lite-HRNet-30 | 256×192 | 39.82 | 80.55 | 41.12 | 46.75 | 82.50 | 55.00 |
| Lite-HRNet | Lite-HRNet-30 | 384×288 | 43.02 | 90.10 | 37.32 | 50.75 | 90.00 | 52.50 |
| HRNet | HRNet-W32 | 256×192 | 47.63 | 92.03 | 40.77 | 55.50 | 92.50 | 55.00 |
| HRNet | HRNet-W32 | 384×288 | 48.11 | 89.25 | 41.87 | 56.00 | 90.00 | 57.50 |
| HRNet | HRNet-W48 | 256×192 | 47.25 | 87.13 | 42.85 | 52.50 | 87.50 | 55.00 |
| HRNet | HRNet-W48 | 384×288 | 50.76 | 94.06 | 40.92 | 58.25 | 95.00 | 57.50 |
| HPFPE (Ours) | HRNet-W32 | 256×192 | 47.88 | 92.03 | 33.28 | 54.25 | 92.50 | 50.00 |
| HPFPE (Ours) | HRNet-W32 | 384×288 | 49.50 | 90.46 | 38.23 | 56.25 | 92.50 | 55.00 |
| HPFPE (Ours) | HRNet-W48 | 256×192 | 48.54 | 84.91 | 44.25 | 55.50 | 87.50 | 57.50 |
| HPFPE (Ours) | HRNet-W48 | 384×288 | 52.96 | 91.18 | 52.67 | 59.50 | 92.50 | 62.50 |

Fig. 7 Visualization results of different methods on ornamental fish data