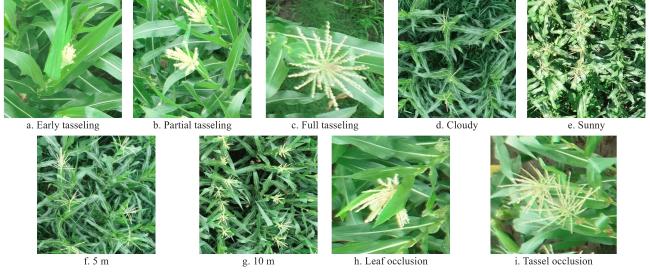

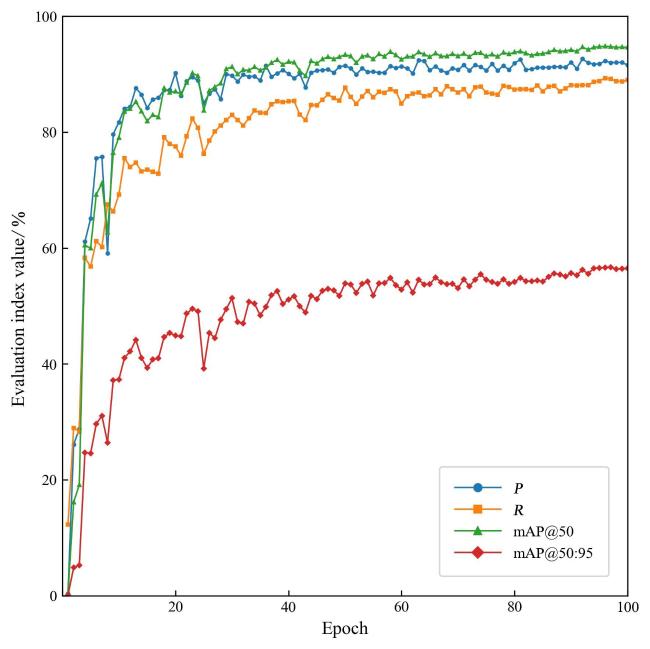

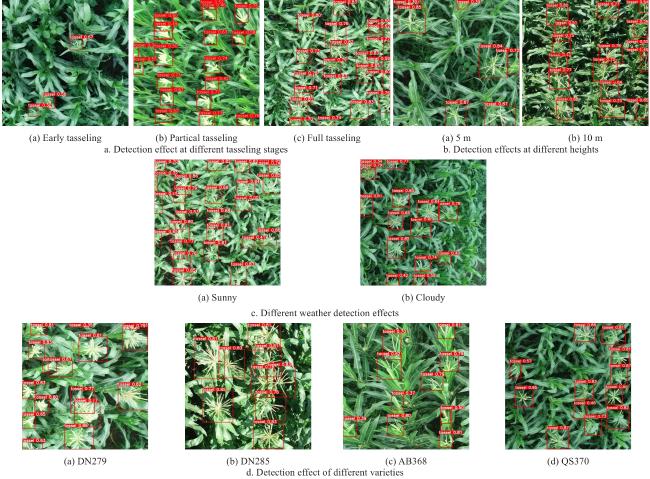

The results showed that detection performed best at the full flowering stage (P = 91.9%, R = 88.9%, AP@0.5 = 93.5%, AP@0.5:0.95 = 57.2%), followed by the partial tasseling stage, while the early tasseling stage had the lowest accuracy (P = 88.4%, R = 79.1%, AP@0.5 = 89.0%, AP@0.5:0.95 = 50.4%). These disparities arose from the phenotypic differences of maize tassels across growth stages, as shown in Fig. 9a. During early tasseling, the tassels were relatively small, so the model could extract only limited features from them in the processed UAV images, which resulted in poorer detection performance. As the tassels developed and reached full flowering, their color and phenotypic features became more prominent, making them easier for the model to detect. Detection performance on the 5 m altitude test set was better than on the 10 m test set, with P, R, AP@0.5, and AP@0.5:0.95 higher by 1.0, 1.7, 2.0, and 0.6 percentage points, respectively.
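The altitude comparison above can be related to image resolution through the ground sampling distance (GSD) of a nadir-pointing camera, $\mathrm{GSD} = Hp/f$, where $H$ is the flight altitude, $p$ the sensor pixel pitch and $f$ the focal length. As a worked sketch, assuming the same camera, lens and image processing were used at both altitudes (the camera parameters are not stated in this section), the ratio depends on $H$ alone, and the number of pixels $N$ covering a tassel of fixed ground area $A$ scales as $N \approx A/\mathrm{GSD}^2$:

$$
\frac{\mathrm{GSD}_{10\,\mathrm{m}}}{\mathrm{GSD}_{5\,\mathrm{m}}} = \frac{10\,p/f}{5\,p/f} = 2,
\qquad
\frac{N_{10\,\mathrm{m}}}{N_{5\,\mathrm{m}}} \approx \left(\frac{\mathrm{GSD}_{5\,\mathrm{m}}}{\mathrm{GSD}_{10\,\mathrm{m}}}\right)^{2} = \frac{1}{4}
$$

Under these assumptions, a tassel imaged from 10 m spans about half as many pixels in each direction, and roughly a quarter as many in total, as the same tassel imaged from 5 m.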

Fig. 9b suggests that the lower accuracy at 10 m may be due to image resolution decreasing as the UAV flight altitude increases, which makes tassel features less distinct and thereby reduces detection accuracy. Detection under cloudy conditions outperformed detection under sunny conditions. As shown in Fig. 9c, strong natural sunlight on sunny days caused severe reflections on maize leaves, resulting in overexposed images and blurred boundaries between tassels and leaves, which made tassels difficult to distinguish. Under cloudy conditions, the overall image was darker and tassels and leaves reflected light differently, making it easier for the model to separate tassels from the complex background. Thus, cloudy weather was more conducive to detection.

Among the varieties, DN279 achieved the best detection results (P = 91.9%, R = 88.0%, AP@0.5 = 93.6%, AP@0.5:0.95 = 56.5%), followed by DN285, while AB368 performed relatively worse (P = 84.8%, R = 82.1%, AP@0.5 = 86.6%, AP@0.5:0.95 = 52.7%). This likely stemmed from DN279 and DN285 being included in the training dataset of the LightTassel-YOLO model, which gave the model stronger feature extraction for these varieties. In contrast, AB368 and QS370 were not included in training and were used only for testing; their phenotypes therefore differed more from those the model had learned, which lowered detection performance. In addition, Fig. 9d shows that DN279 tassels were more concentrated, morphologically clear, and more deeply colored, contrasting distinctly with the surrounding leaves, which allowed the model to locate tassels and their boundaries more accurately. This variety showed strong robustness and precision during detection. DN285 tassels had a more branched, slender morphology, and denser leaves caused some occlusion between tassels and between tassels and leaves; however, the model still detected most occluded tassels accurately. AB368 showed weaker detection results because its tassel branches were slender and had low color contrast with the leaves, which reduced the model's ability to discriminate them; some tassels also had complex shapes that increased detection difficulty, lowering overall accuracy. QS370's detection results fell between those of DN285 and AB368. Its tassels were more scattered with weaker features, leading to lower confidence scores for some targets, and its thin branches were more easily occluded by the complex background, which affected detection completeness.

Overall, these findings indicate that both the morphological traits of maize tassels and background complexity affect the detection performance of LightTassel-YOLO. Nonetheless, the model still achieved favorable results across diverse test conditions, demonstrating strong robustness and adaptability.
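Since the comparisons in this section rest on P, R, AP@0.5 and AP@0.5:0.95, a minimal single-class Python sketch of how these metrics are commonly computed may help when reading them. It assumes greedy, score-ordered matching of predicted boxes to ground-truth boxes, all-point interpolated AP, and that predictions have already been filtered at some confidence threshold before P and R are computed. All function names are hypothetical, and detection toolkits (including YOLO evaluation code) differ in details such as interpolation and matching rules, so this is not the evaluation procedure used for LightTassel-YOLO.

```python
from typing import List, Sequence, Tuple

# Hypothetical helpers, a sketch under stated assumptions; not the paper's evaluation code.
Box = Tuple[float, float, float, float]   # (x1, y1, x2, y2) in pixels
Pred = Tuple[Box, float]                  # (box, confidence score)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_predictions(preds: Sequence[Pred], gts: Sequence[Box], iou_thr: float) -> List[bool]:
    """Greedily match predictions (highest score first) to unmatched ground truths.

    Returns one TP (True) / FP (False) flag per prediction, in descending score order.
    """
    order = sorted(range(len(preds)), key=lambda i: preds[i][1], reverse=True)
    used = [False] * len(gts)
    flags: List[bool] = []
    for i in order:
        box = preds[i][0]
        best_j, best_v = -1, iou_thr
        for j, gt in enumerate(gts):
            if used[j]:
                continue
            v = iou(box, gt)
            if v >= best_v:  # best still-unmatched ground truth at or above the threshold
                best_j, best_v = j, v
        if best_j >= 0:
            used[best_j] = True
        flags.append(best_j >= 0)
    return flags


def precision_recall(flags: Sequence[bool], n_gt: int) -> Tuple[float, float]:
    """P = TP / (TP + FP), R = TP / (TP + FN), with TP + FN = number of ground truths."""
    tp = sum(flags)
    p = tp / len(flags) if flags else 0.0
    r = tp / n_gt if n_gt else 0.0
    return p, r


def average_precision(flags: Sequence[bool], n_gt: int) -> float:
    """Area under the all-point interpolated precision-recall curve."""
    if n_gt == 0:
        return 0.0
    tp = fp = 0
    recalls, precisions = [], []
    for is_tp in flags:
        tp += is_tp
        fp += not is_tp
        recalls.append(tp / n_gt)
        precisions.append(tp / (tp + fp))
    mrec = [0.0] + recalls + [1.0]
    mpre = [0.0] + precisions + [0.0]
    # Precision envelope: make precision non-increasing as recall grows.
    for k in range(len(mpre) - 2, -1, -1):
        mpre[k] = max(mpre[k], mpre[k + 1])
    return sum((mrec[k + 1] - mrec[k]) * mpre[k + 1] for k in range(len(mrec) - 1))


def ap_50_95(preds: Sequence[Pred], gts: Sequence[Box]) -> float:
    """AP@0.5:0.95: mean AP over IoU thresholds 0.50, 0.55, ..., 0.95."""
    thresholds = [round(0.5 + 0.05 * k, 2) for k in range(10)]
    aps = [average_precision(match_predictions(preds, gts, t), len(gts)) for t in thresholds]
    return sum(aps) / len(aps)


if __name__ == "__main__":
    # Toy example: two ground-truth tassels, three predictions (made-up numbers).
    gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
    preds = [((0, 0, 10, 10), 0.9), ((21, 21, 31, 31), 0.8), ((50, 50, 60, 60), 0.3)]
    flags = match_predictions(preds, gts, 0.5)
    p, r = precision_recall(flags, len(gts))
    print(round(p, 3), r, average_precision(flags, len(gts)), round(ap_50_95(preds, gts), 3))
    # prints: 0.667 1.0 1.0 0.7
```

In the toy example, the second box (IoU of about 0.68) counts as a hit at the 0.5 threshold but not at 0.70 and above. This shows why AP@0.5:0.95 values sit well below AP@0.5 values throughout this section: the stricter thresholds also reward tight box localization.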