Smart Agriculture ›› 2026, Vol. 8 ›› Issue (1): 120-147. doi: 10.12133/j.smartag.SA202507028

• Overview Article •

DAI Weijiao1, LIANG Yudongchen1, ZHOU Yong2, YAO Chao1, ZHANG Cheng1,3, SONG Yongjian4, LI Guoliang1, TIAN Fang1,3

Received:2025-07-28

Online:2026-01-30

Foundation items: Special Project for the Construction of the Modern Agricultural Industry Technology System of Gansu Province (GSARS02)

About author: LIANG Yudongchen, master's student; research interest: computer vision. E-mail: YudongchenLiang@webmail.hzau.edu.cn


DAI Weijiao, LIANG Yudongchen, ZHOU Yong, YAO Chao, ZHANG Cheng, SONG Yongjian, LI Guoliang, TIAN Fang. Advances and Prospects in Body-Size Measurement of Sheep: From 2D Vision to 3D Reconstruction and 2D-3D Fusion[J]. Smart Agriculture, 2026, 8(1): 120-147.


URL: https://www.smartag.net.cn/EN/10.12133/j.smartag.SA202507028

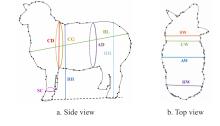

Fig. 1

Sheep body measurement diagram Note: BL denotes body length; BH denotes body height; CD denotes chest depth; CG denotes chest girth; AD denotes abdominal dimension; HH denotes hip height; SC denotes shank circumference; SW denotes shoulder width; CW denotes chest width; AW denotes abdominal width; HW denotes hip width.

Fig. 2

Overview diagram of sheep body measurement techniques Note: SLIC denotes simple linear iterative clustering; R-CNN denotes region-based convolutional neural network; ICP denotes iterative closest point; SLAC denotes simultaneous localization and calibration; Lite-HRNet denotes lite high-resolution network; SIFT denotes scale-invariant feature transform; SVM denotes support vector machine; ROR denotes radius outlier removal; ISS3D denotes intrinsic shape signatures 3D; 4PCS denotes 4-points congruent sets; KCGATNet denotes kernel-based channel graph attention transformer network.

Table 1

Image-based body size measurement methods: applications in sheep and reference cases in livestock

| Subjects | Camera setup | Image algorithms | Animal numbers | Traits & accuracy | Main limitations | Year | Work |
|---|---|---|---|---|---|---|---|
| Sheep | Logitech C920 HD Pro webcam | Partial least squares algorithm | 27 | BL: 3.5%; BH: 3.5%; CD: 3.5% | Colour-contrast sensitive | 2014 | [ |
| Sheep | CCD wide-angle camera | Background difference method | NA | BL: 2%; BH: 2% | Cluttered background leaks | 2014 | [ |
| Sheep | MV-EM120C | SLIC + FCM clustering | 27 | BL: 2.03%; BH: 1.13%; CD: 4.45%; CW: 2.25%; HH: 1.54%; HW: 2.41% | Cluster number must be tuned | 2018 | [ |
| Pig | Kinect v1 (RGB) | Semi-global matching + image subtraction | 200 | BL, BH, HH, HW, SW: <2.83% | Depth not used; lighting sensitive | 2019 | [ |
| Cow | Single RGB | Canny algorithm | NA | BL: 0.06%; BH: 2.28% | Edge gaps need manual repair | 2020 | [ |
| Yak | Single RGB | Sobel operator + SLIC | 33 | BH: 1.95%; BL: 3.11%; CD: 4.91% | K-value manually tuned | 2021 | [ |
| Cattle | Azure Kinect DK | Shadow aberration method + watershed segmentation | 34 | BL: 2.7 cm; BH: 2.07 cm; AW: 1.47 cm | High-contrast uniform background required | 2022 | [ |
| Sheep | Single RGB | PreciseEdge | 75 | BL: r=0.943; BH: r=0.931; CG: r=0.893 | High-contrast uniform background required | 2022 | [ |
| Sheep, Cattle | Intel RealSense D415 | U-Net | 55 | BL: 2.42%; BH: 1.86%; HH: 2.07%; CD: 2.72% | Heavy model, embedded-unfriendly | 2022 | [ |
| Cattle | Hikvision RGB | SOLOv2 | 200 | BL: 1.36%; BH: 0.44%; CD: 2.05%; CW: 2.80% | Extreme-pose drift | 2022 | [ |
| Cattle | Smartphone RGB | YOLOv5s + Lite-HRNet | 30 | BL: 7.55%; BH: 6.75%; CD: 8.00%; SC: 8.97% | Key-point occlusion drift | 2024 | [ |
| Cattle | RealSense D455 | Improved Mask2Former | 137 | BH: 4.32%; HH: 3.71%; BL: 5.58%; SC: 6.25% | High posture sensitivity; large errors in girth measurements | 2024 | [ |

Table 2

Comparison of geometric feature extraction methods for sheep body size measurement

| Subjects | Method | Principle | Time complexity | Advantage | Disadvantage | Work |
|---|---|---|---|---|---|---|
| Sheep | Corner (inflection-point) detection | Compute curvature or angle discontinuities along the contour | O(n) | Very fast; accurate localization | Noise-sensitive | [ |
| Sheep | Convex-hull analysis | Compute the convex hull of a point set and retain its vertices | O(n log n) | Parameter-free; fast execution | Keeps only "most convex" points; loses concavities | [ |
| Sheep | Edge detection | Detect gray-level discontinuities via gradient/entropy/filtering | O(n) | Highly general-purpose | Fragile edges; post-processing required | [ |
| Sheep | Maximum-curvature extraction | Locate curvature extrema along the edge | O(n) | Mathematically well-defined | Requires smoothing; prone to burrs | [ |
| Sheep | Region-based U-chord curvature | Compute curvature with a fixed-chord sliding window | O(n) | Balances local & global shape | Extra chord-length parameter to tune | [ |
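The convex-hull row of Table 2 can be illustrated with a minimal sketch. It uses Andrew's monotone chain, which is one standard O(n log n) hull algorithm; the cited sheep studies may use a different variant, and the toy contour below is made up purely for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def cross(o: Point, a: Point, b: Point) -> float:
    """Z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points: List[Point]) -> List[Point]:
    """Return hull vertices in counter-clockwise order (Andrew's monotone chain)."""
    pts = sorted(set(points))  # sorting dominates the cost: O(n log n)
    if len(pts) <= 2:
        return pts
    lower: List[Point] = []
    for p in pts:
        # Drop the last vertex while it makes a clockwise or collinear turn.
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other chain.
    return lower[:-1] + upper[:-1]


# Hypothetical toy contour with one concave point at (2, 1).
contour = [(0, 0), (4, 0), (4, 3), (2, 1), (0, 3)]
hull = convex_hull(contour)
print(hull)
```

The concave point (2, 1) does not appear in the result, which is the "loses concavities" limitation listed in the table.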

Table 3

Comparison of deep learning-based keypoint detection methods for sheep and related livestock body size measurement

| Subjects | Method | Principle | Advantage | Disadvantage | Work |
|---|---|---|---|---|---|
| Sheep, Cattle | Multi-stage dense hourglass | Multi-scale fusion with iterative up/down-sampling for end-to-end key-point detection | Highest localization accuracy and strong robustness to pose and coat variations | Large parameter count, high GPU memory usage, slow inference speed | [ |
| Cattle | YOLOv5s + Lite high-resolution network (Lite-HRNet) | YOLOv5s proposes regions; Lite-HRNet refines keypoints | Excellent speed-accuracy trade-off, lightweight design, and suitable for edge deployment | Relies on detection box quality; key points tend to drift under dense occlusion | [ |
| Pig | Faster R-CNN + Neural Architecture Search (NAS) | Faster R-CNN defines the search region; NAS refines the keypoints | High detection accuracy and strong generalizability | Slowest inference speed, difficult to meet real-time requirements, and long training and hyper-parameter tuning cycles | [ |

Table 4

Comparison of 3D point cloud methods for sheep and related livestock body size measurement

| Subjects | Camera type | Algorithms | Animal numbers | Body traits & accuracy | Year | Work |
|---|---|---|---|---|---|---|
| Sheep | REVscan_3D | Octree + Delaunay triangulation | 1 | BL / BH / CW / HH / HW: 1.01% | 2019 | [ |
| Cow | Binocular vision | Scale-invariant feature transform (SIFT) algorithm | 20 | BL: 1.14%; BH: 1.57%; SW: 2.24% | 2020 | [ |
| Sheep | TOF 3D camera | Principal component analysis; random sample consensus (RANSAC); improved region growing method | 1 | BL / BH / CD / HH: 2.36% | 2020 | [ |
| Cattle | Kinect v2 | Background subtraction, ROR algorithm, iterative closest point (ICP), ISS3D | 103 | BL / BH / CD / HH: <3% | 2020 | [ |
| Pig | Intel RealSense D720 | Depthwise separable convolution | 239 | BL: 0.75 cm; HW: 0.38 cm; BH: 1.23 cm; SW: 0.33 cm; HH: 0.66 cm | 2021 | [ |
| Cattle | Kinect DK | Five-point clustering gradient boundary recognition algorithm | 10 | BL: 2.3%; BH: 2.8%; CG: 2.6%; AD: 2.8%; SW: 1.6% | 2022 | [ |
| Pig | Kinect v2 | Variance classification algorithms | 50 | BL: 0.7%; BH: 1.8%; SW: 3.3% | 2022 | [ |
| Pig | RealSense L515 | DeepLabCut + EfficientNet-b6 | 12 | BL / BH / HH / HW / SW: 1.79 cm | 2023 | [ |
| Pig | NA | Improved PointNet++ | 25 | BL: 2.57%; BH: 2.18%; HH: 2.28%; SW: 4.56%; CG: 2.50%; AD: 3.14% | 2023 | [ |
| Sheep | Kinect v2 | ICP, pass-through filtering, statistical filtering, RANSAC | 2 | BL / BH / HH / HW: <5% | 2024 | [ |
| Sheep | MV-EM120C GigE | YOLOv11n-Pose, CNN, ElasticNet | 51 | BL: 3.11%; BH: 1.93%; CW: 3.38%; CD: 2.52% | 2025 | [ |
| Sheep | Kinect v2 | PointNet++ | 24 | BH: 1.67%; CW: 3.63%; HH: 1.14%; BL: 2.71%; CG: 3.57%; HW: 3.71% | 2025 | [ |
| Sheep | Kinect DK | Improved PointNet++, CPD | 120 | BL: 3.34%; BH: 3.07%; HH: 3.32%; CD: 3.63%; CG: 2.81% | 2025 | [ |
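Radius outlier removal (ROR), listed among the point cloud pre-processing steps in Table 4, can be sketched in a few lines. This is a brute-force O(n²) illustration in pure Python, not the implementation used in the cited work; the function name, the `radius` and `min_neighbors` values, and the toy cloud are all assumptions chosen for the example. Practical pipelines would normally use a spatial index such as a k-d tree.

```python
import math
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]


def radius_outlier_removal(
    points: Sequence[Point3], radius: float, min_neighbors: int
) -> List[Point3]:
    """Keep points that have at least `min_neighbors` other points within `radius`."""
    kept: List[Point3] = []
    for i, p in enumerate(points):
        # Count neighbours of p, excluding p itself.
        neighbors = sum(
            1
            for j, q in enumerate(points)
            if j != i and math.dist(p, q) <= radius
        )
        if neighbors >= min_neighbors:
            kept.append(p)
    return kept


# Hypothetical toy cloud: four clustered points and one isolated point.
cloud = [
    (0.0, 0.0, 0.0),
    (0.01, 0.0, 0.0),
    (0.0, 0.01, 0.0),
    (0.0, 0.0, 0.01),
    (1.0, 1.0, 1.0),  # isolated point, expected to be removed
]
filtered = radius_outlier_removal(cloud, radius=0.05, min_neighbors=2)
print(len(filtered))
```

The isolated point has no neighbours within the radius and is dropped, while the clustered points each have three neighbours and are kept.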

Table 5

Comparison of key-point methods based on hand-crafted geometric features in sheep studies

| Subjects | Method | Principle | Advantages | Limitations | Work |
|---|---|---|---|---|---|
| Sheep | Normal-vector and curvature fusion simplification algorithm | Simplify point cloud by combining normal vectors and curvature, automatically extract body measurement points on cow back | Preserves key feature points, offers high extraction accuracy, and adapts to various postures | Relies heavily on point cloud quality and pre-processing; high computational complexity | [ |
| Sheep | Spatial distribution statistical method | Based on spatial distribution features of the point cloud, set thresholds through pass-through filtering, combined with the extremum method and dimensionality-reduction projection to locate measurement points | High computational efficiency; real-time capability; robust to wool-thickness interference | Dependent on fixed postures and prior knowledge of spatial proportions; limited adaptability across breeds and growth stages; sensitive to outlier points | [ |
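The spatial distribution statistical method in Table 5 combines pass-through filtering, projection, and an extremum search. A minimal sketch of that combination follows. The axis convention (x along the body, y lateral, z up), the slab bounds, and the toy points are hypothetical and not taken from the cited study.

```python
from typing import List, Optional, Sequence, Tuple

Point3 = Tuple[float, float, float]


def pass_through(
    points: Sequence[Point3], axis: int, lo: float, hi: float
) -> List[Point3]:
    """Keep points whose coordinate on `axis` lies in the closed interval [lo, hi]."""
    return [p for p in points if lo <= p[axis] <= hi]


def highest_point_in_slab(
    points: Sequence[Point3], x_min: float, x_max: float
) -> Optional[Tuple[float, float]]:
    """Locate a candidate measurement point as the z-extremum inside an x-slab."""
    slab = pass_through(points, axis=0, lo=x_min, hi=x_max)
    if not slab:
        return None
    # Dimensionality reduction: project onto the x-z plane by dropping y.
    projected = [(x, z) for x, _, z in slab]
    # Extremum method: the highest projected point is the candidate.
    return max(projected, key=lambda xz: xz[1])


# Hypothetical toy cloud in metres.
cloud = [
    (0.10, 0.00, 0.55),
    (0.20, 0.05, 0.62),
    (0.25, -0.03, 0.60),
    (0.80, 0.00, 0.64),  # outside the slab, ignored despite being higher
    (0.22, 0.00, 0.30),
]
candidate = highest_point_in_slab(cloud, x_min=0.15, x_max=0.30)
print(candidate)
```

Because the slab bounds encode where a landmark is expected along the body, the result depends on posture and on prior proportions, which matches the limitation stated in the table.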

Table 6

Comparison of sheep pose normalization methods

| Subjects | Method | Pose-handling strategy | Advantages | Limitations | Work |
|---|---|---|---|---|---|
| Sheep | Global-local non-rigid warping | Use dynamic position encoding with a similarity transformation group to obtain the pose, followed by template alignment | No reliance on strict symmetry or complete point clouds, enabling non-rigid pose recovery | Heavy computation; template library required | [ |
| Sheep | CNN micro-pose classification + ElasticNet regression | An error-correction model based on CNN micro-pose classification and ElasticNet regression | Lightweight deployment | Still sensitive to resolution/angle; extreme poses fail | [ |
| Sheep | PointNet++ region segmentation and re-projected coordinate frame | Multi-view local pose normalization, PointNet++ segmentation, re-projected coordinate frame | Suppresses drifting landmarks under sudden poses | Relies solely on geometric coordinates without fusing RGB or other modalities, resulting in limited discriminative power under occlusion or specular reflection | [ |

Table 7

The relationship between body weight and body size of sheep

| Animal numbers | Body size indicators | Number of indicators | Year | Work |
|---|---|---|---|---|
| 882 | BL, BH, CG, SC, HH, HW, etc. | 7 | 2018 | [ |
| 94 | BL, BH, CG, SC | 4 | 2018 | [ |
| 50 | BL, BH, CD, CG, CW, SC, HW, etc. | 9 | 2019 | [ |
| 706 | BL, BH, CG, SC | 4 | 2020 | [ |
| 145 | BL, BH, CD, CG, CW, HW | 6 | 2020 | [ |
| 32 | BL, BH, CD, CG, CW, SC | 6 | 2021 | [ |
| 136 | BL, BH, CD, CG, CW, SC, SH, HW | 8 | 2021 | [ |
| 745 | BL, BH, CD, CG, CW, SC, HH | 7 | 2021 | [ |
| 653 | BL, BH, CD, CG, CW, HW | 6 | 2021 | [ |
| 408 | BL, BH, CD, CG, CW, SC, etc. | 10 | 2022 | [ |
| 558 | BL, BH, CG | 3 | 2022 | [ |
| 12 500 | BL, BH, CD, CG, CW, SC, HH, BW, etc. | 13 | 2022 | [ |
| 507 | BL, BH, CG, etc. | 5 | 2022 | [ |
| 56 | BL, BH, CG, SC, HH, HW | 6 | 2022 | [ |
| 100 | BL, CG, HH, SH | 4 | 2022 | [ |
| 334 | BL, BH, CG, SC | 4 | 2023 | [ |
| 1 289 | BL, BH, CD, CG, CW, SC | 6 | 2023 | [ |
| 150 | BL, CG, SH | 3 | 2023 | [ |
| 210 | BL, CD, CG, CW, HH, BH, etc. | 7 | 2023 | [ |
| 239 | BL, BH, CD, CG, CW, etc. | 6 | 2024 | [ |
| 916 | BL, BH, CG, SC | 4 | 2024 | [ |
| 239 | BL, BH, CG, CW, SC, etc. | 6 | 2024 | [ |
| 100 | BL, CG, HH, HW, AD, SW, etc. | 14 | 2024 | [ |

Table 8

Accuracy data comparison of 2D, 3D, and 2D-3D body measurement methods in sheep and other livestock

| Subjects | Methods | Animal numbers | Body traits & accuracy | Year | Work |
|---|---|---|---|---|---|
| Sheep | 2D | 27 | BL: 2.03%; BH: 1.13%; CD: 4.45%; CW: 2.25%; HH: 1.54%; HW: 2.41% | 2018 | [ |
| Cattle | 2D | NA | BL: 0.06%; BH: 2.28% | 2020 | [ |
| Sheep | 2D | 55 | BL: 2.42%; BH: 1.86%; HH: 2.07%; CD: 2.72% | 2022 | [ |
| Cattle | 2D | 30 | BL: 7.55%; BH: 6.75%; CD: 8.00%; SC: 8.97% | 2024 | [ |
| Sheep | 3D | 1 | BL / BH / CD / HH: 2.36% | 2020 | [ |
| Cattle | 3D | 103 | BL / BH / CD / HH: <3% | 2020 | [ |
| Sheep | 3D | 239 | BL: 0.75 cm; HW: 0.38 cm; BH: 1.23 cm; SW: 0.33 cm; HH: 0.66 cm | 2021 | [ |
| Cattle | 3D | 10 | BL: 2.3%; BH: 2.8%; CG: 2.6%; AD: 2.8%; SW: 1.6% | 2022 | [ |
| Sheep | 3D | 2 | BL / BH / HH / HW: <5% | 2024 | [ |
| Sheep | 3D | 24 | BH: 1.67%; CW: 3.63%; HH: 1.14%; BL: 2.71%; CG: 3.57%; HW: 3.71% | 2025 | [ |
| Cattle | 2D-3D | NA | BL: 2.14%; BH: 0.76%; HH: 0.76% | 2022 | [ |
| Pig | 2D-3D | NA | BL: 2.33%; BH: 1.92%; SW: 1.29%; HW: 1.26% | 2021 | [ |
| Horse | 2D-3D | 80 | BH / BL / HH / CG / AD: <2.29% | 2024 | [ |


